Optimized LLMs Fine-tuning with Unsloth

Use Unsloth for memory-efficient LLM fine-tuning

Overview

This playbook shows how to fine-tune a language model locally with Unsloth on AMD hardware.

It uses a short Supervised Fine-Tuning (SFT) example with LoRA adapters on unsloth/gemma-4-E4B-it, using a subset of the mlabonne/FineTome-100k dataset. The goal is to give you a simple end-to-end workflow that covers setup, training, inference, and saving the fine-tuned result.

The example is designed to be practical and easy to modify, so you can use it as a starting point for your own datasets and models.

What You’ll Learn

- How to set up the Unsloth environment

- How to fine-tune an LLM using SFT with Unsloth

- How to save the fine-tuned result in local storage

Why Unsloth?

Unsloth makes LLM fine-tuning easier to run on local hardware by reducing memory usage and speeding up training compared to a standard setup.

In this playbook, we use Unsloth together with LoRA-based SFT. That means the base model stays mostly frozen, while a much smaller set of adapter weights is trained. This is a good fit for local development because it is lighter than full fine-tuning and faster to iterate on.

Unsloth also supports other training approaches, including QLoRA and reinforcement learning workflows. This playbook focuses on the simplest path first: a small LoRA fine-tuning example that users can run, understand, and extend.
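To make the "much smaller set of adapter weights" concrete, here is a minimal arithmetic sketch. It assumes the usual LoRA formulation, where a frozen weight matrix of shape d_out x d_in gets two small trainable factors of rank r. The layer size and rank below are hypothetical and are not taken from the playbook's script.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Number of weights in one dense layer (frozen during LoRA training)."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Weights in the two LoRA factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 8

full = full_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
print(f"LoRA trains {lora:,} of {full:,} weights ({lora / full:.2%})")
# prints: LoRA trains 65,536 of 16,777,216 weights (0.39%)
```

Because only the adapter factors receive gradients and optimizer state, the training footprint for this layer shrinks roughly in proportion to that ratio.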

Set up your environment

If AMD ROCm™ software and PyTorch are already installed system-wide, open a terminal and run the following commands to create a venv that can see those packages:

    sudo apt update
    sudo apt install -y python3-venv
    python3 -m venv unsloth-env --system-site-packages
    source unsloth-env/bin/activate

Otherwise, open a terminal and run the following commands to create a clean venv:

    sudo apt update
    sudo apt install -y python3-venv
    python3 -m venv unsloth-env
    source unsloth-env/bin/activate

Installing Basic Dependencies

ROCm

Add the current user to the render and video groups.

    sudo usermod -a -G render,video $LOGNAME

Restart your system to apply the settings.

    sudo reboot

Install ROCm in the created virtual environment. Use the index URL that matches your GPU target.

For gfx1151:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1151/ "rocm[libraries,devel]"

For gfx1152:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1152/ "rocm[libraries,devel]"

For gfx1150:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1150/ "rocm[libraries,devel]"

For gfx120x:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx120x-all/ "rocm[libraries,devel]"

For further installation help, please see this link.

PyTorch

Install PyTorch with AMD ROCm™ software support in the created virtual environment:

Use the index URL that matches your GPU target.

For gfx1151:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1151/ torch torchvision torchaudio

For gfx1152:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1152/ torch torchvision torchaudio

For gfx1150:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1150/ torch torchvision torchaudio

See this link for details.
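After installing, it can help to confirm that PyTorch sees the GPU before moving on. The sketch below is not part of the playbook's script; the helper name `describe_torch_backend` is hypothetical. It relies on the fact that ROCm builds of PyTorch expose the GPU through the `torch.cuda` API and report a HIP version in `torch.version.hip`.

```python
def describe_torch_backend() -> dict:
    """Report whether PyTorch is importable and whether it can see a GPU."""
    info = {
        "torch_installed": False,
        "hip_version": None,
        "gpu_available": False,
        "device_name": None,
    }
    try:
        import torch
    except (ImportError, OSError):
        # PyTorch is missing or its native libraries failed to load.
        return info

    info["torch_installed"] = True
    # torch.version.hip is a version string on ROCm builds and None otherwise.
    info["hip_version"] = getattr(torch.version, "hip", None)
    if torch.cuda.is_available():
        info["gpu_available"] = True
        info["device_name"] = torch.cuda.get_device_name(0)
    return info


if __name__ == "__main__":
    for key, value in describe_torch_backend().items():
        print(f"{key}: {value}")
```

If `gpu_available` is false on a machine with a supported GPU, the group membership and driver steps are the first things to recheck.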

AMD GPU Driver

Update to the latest AMD GPU driver using AMD Software: Adrenalin Edition™.

- Open AMD Software: Adrenalin Edition from your Start menu or system tray.

- Navigate to Driver and Software, click Manage Updates.

- If an update is available, follow the prompts to download and install.

AMD GPU Driver

Download and install the latest AMD GPU driver for Linux:

- Visit the AMD Linux Drivers page.

- Follow the installation instructions provided on the download page.

Additional Dependencies

    pip install "unsloth[amd] @ git+https://github.com/unslothai/unsloth.git"
    pip install --no-deps git+https://github.com/unslothai/unsloth-zoo.git
    pip install --no-deps --upgrade timm
    pip install datasets transformers trl

Download the Unsloth Fine-Tuning Script

Instead of manually executing each step, this playbook provides a clean, end-to-end script, test_unsloth.py.

Run the following command to execute the script:

    python test_unsloth.py

The rest of the playbook walks through each major step of the script.

How It Works

The test_unsloth.py script performs the following steps:

- Load Model: Loads unsloth/gemma-4-E4B-it using FastModel.

- Prepare Data: Standardizes the dataset (e.g., FineTome-100k) and applies the Gemma-4 chat template.

- Apply LoRA: Adds adapters to language, attention, and MLP modules for efficient training.

- Train: Uses SFTTrainer with response-only loss masking.

- Inference: Runs a quick generation test to verify performance.

- Save: Exports LoRA adapters locally.
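The "response-only loss masking" in the Train step can be illustrated without any training library. This is a conceptual sketch, not the script's code: token IDs and the response flags are made up, and the only fixed convention is the label value -100, which PyTorch's cross-entropy loss ignores by default.

```python
IGNORE_INDEX = -100  # label value skipped by PyTorch's cross-entropy loss


def mask_non_response(token_ids: list[int], is_response: list[bool]) -> list[int]:
    """Keep labels for assistant-response tokens and mask everything else."""
    if len(token_ids) != len(is_response):
        raise ValueError("token_ids and is_response must have the same length")
    return [
        token if keep else IGNORE_INDEX
        for token, keep in zip(token_ids, is_response)
    ]


# Hypothetical tokenized conversation: three prompt tokens, three response tokens.
token_ids = [11, 12, 13, 21, 22, 23]
is_response = [False, False, False, True, True, True]

labels = mask_non_response(token_ids, is_response)
print(labels)
# prints: [-100, -100, -100, 21, 22, 23]
```

With labels built this way, the model is only penalized for what it generates as the assistant, not for reproducing the user's prompt.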

Key Configuration

You can modify the following constants to customize your run:

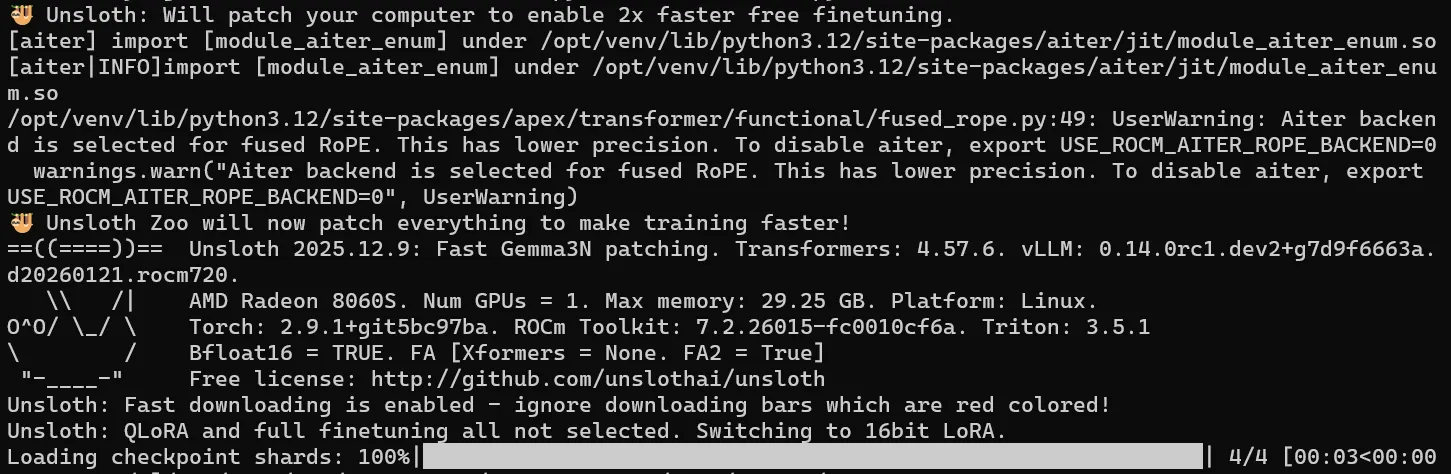

MODEL_NAME = "unsloth/gemma-4-E4B-it"MAX_SEQ_LEN = 1024DATASET_NAME = "mlabonne/FineTome-100k"OUTPUT_DIR = "gemma_4_lora"Example of the Unsloth welcome message and output when loading the model weights:
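As a rough sketch of how these constants might feed the Load Model and Apply LoRA steps, the function below uses Unsloth's `FastModel.from_pretrained` and `FastModel.get_peft_model`. The function name `load_model_with_lora` is hypothetical, and the LoRA rank, alpha, dropout, and seed are assumed values rather than the ones in test_unsloth.py.

```python
MODEL_NAME = "unsloth/gemma-4-E4B-it"
MAX_SEQ_LEN = 1024


def load_model_with_lora(model_name: str = MODEL_NAME, max_seq_len: int = MAX_SEQ_LEN):
    """Load the base model, then wrap it with LoRA adapters."""
    # Imported lazily so that defining this function does not require a GPU.
    from unsloth import FastModel

    model, tokenizer = FastModel.from_pretrained(
        model_name=model_name,
        max_seq_length=max_seq_len,
        load_in_4bit=False,     # standard LoRA, as in this playbook
        full_finetuning=False,  # train adapters only
    )
    model = FastModel.get_peft_model(
        model,
        finetune_vision_layers=False,     # assumption: text-only fine-tuning
        finetune_language_layers=True,
        finetune_attention_modules=True,
        finetune_mlp_modules=True,
        r=8,                # assumed LoRA rank
        lora_alpha=8,       # assumed scaling factor
        lora_dropout=0,     # assumed value
        bias="none",
        random_state=3407,  # assumed seed
    )
    return model, tokenizer
```

Calling `load_model_with_lora()` downloads the model weights on first use, so expect the first run to take noticeably longer than later ones.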

Prepare Dataset

We use a subset of:

    mlabonne/FineTome-100k

The dataset is:

- Converted into chat format

- Processed using the Gemma-4 chat template

- Cleaned to remove duplicate BOS tokens
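The first and last of these steps can be sketched in plain Python. This is an illustration under assumptions, not the script's code: it assumes each record holds ShareGPT-style turns with `from` and `value` keys, and that the rendered chat text starts with a literal `<bos>` marker that the tokenizer would otherwise add a second time. The helper names and the sample strings are hypothetical.

```python
# Assumed mapping from ShareGPT-style speaker names to chat roles.
ROLE_MAP = {"system": "system", "human": "user", "gpt": "assistant"}


def to_chat_format(conversation: list[dict]) -> list[dict]:
    """Convert {'from', 'value'} turns into {'role', 'content'} messages."""
    return [
        {"role": ROLE_MAP[turn["from"]], "content": turn["value"]}
        for turn in conversation
    ]


def strip_leading_bos(text: str, bos: str = "<bos>") -> str:
    """Drop one leading BOS marker so tokenization does not produce two."""
    return text.removeprefix(bos)  # requires Python 3.9 or newer


# Hypothetical record and rendered text, for illustration only.
record = [
    {"from": "human", "value": "What is LoRA?"},
    {"from": "gpt", "value": "A parameter-efficient fine-tuning method."},
]
messages = to_chat_format(record)
print(messages[0])
# prints: {'role': 'user', 'content': 'What is LoRA?'}
print(strip_leading_bos("<bos>Hello"))
# prints: Hello
```

In a real run, the chat template itself is applied by the tokenizer between these two steps.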

Train the Model

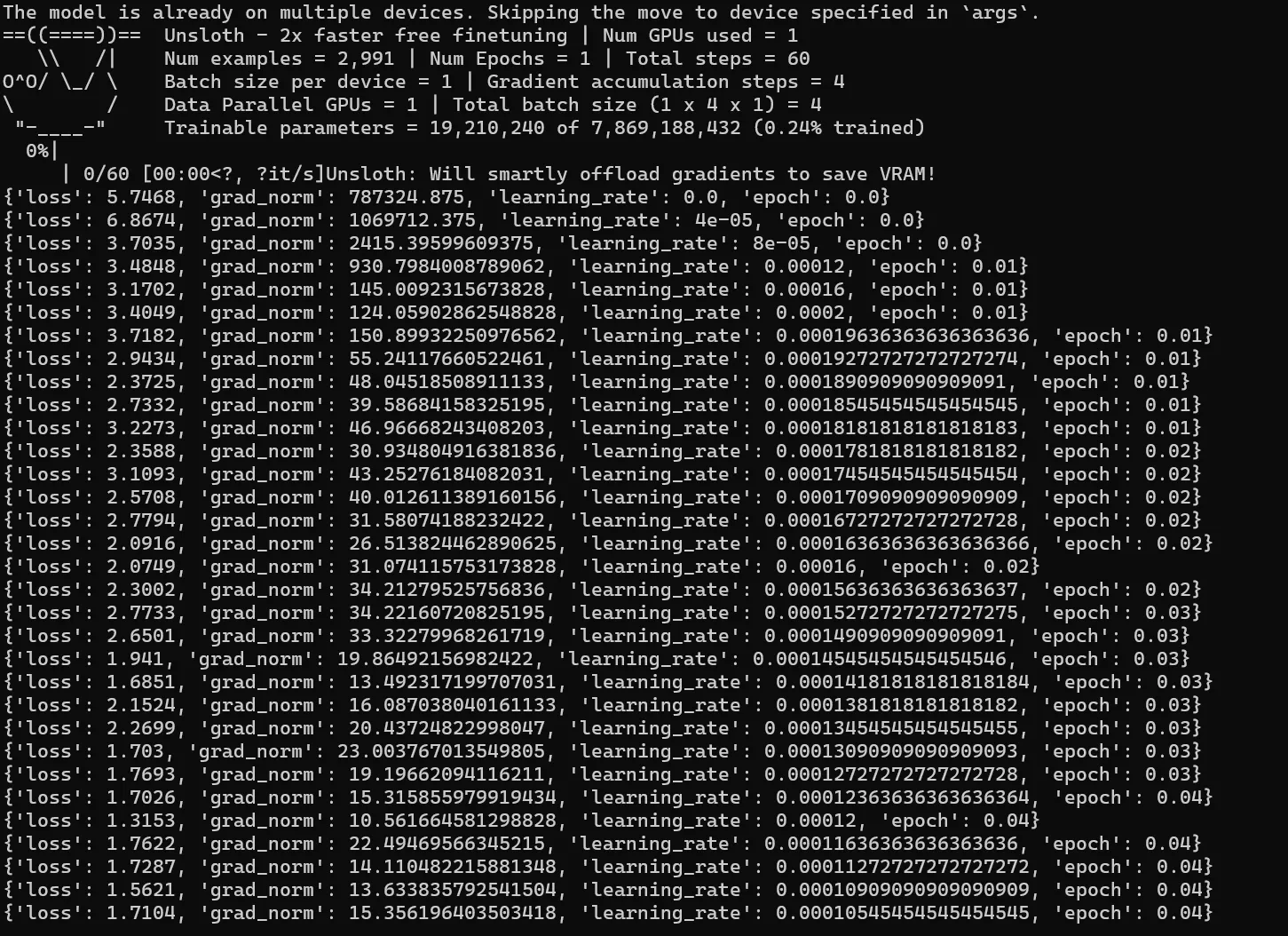

The script runs a short training demo, with the following parameters:

- ~50 steps

- Small batch size

- Gradient accumulation

During training, you will see logs such as:
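The small batch size and gradient accumulation settings listed above combine into an effective batch size. The sketch below shows that arithmetic; the per-device batch size and accumulation steps are hypothetical values, and only the step count of about 50 comes from this playbook.

```python
def effective_batch_size(per_device_batch: int, grad_accum_steps: int, num_devices: int = 1) -> int:
    """Examples contributing to each optimizer update."""
    return per_device_batch * grad_accum_steps * num_devices


def examples_seen(max_steps: int, per_device_batch: int, grad_accum_steps: int) -> int:
    """Total training examples consumed over the run on a single device."""
    return max_steps * effective_batch_size(per_device_batch, grad_accum_steps)


# Hypothetical settings; the playbook only states "small batch size".
per_device_batch = 1
grad_accum_steps = 4
max_steps = 50

print(effective_batch_size(per_device_batch, grad_accum_steps))
# prints: 4
print(examples_seen(max_steps, per_device_batch, grad_accum_steps))
# prints: 200
```

Raising the accumulation steps increases the effective batch size without raising peak activation memory, at the cost of more forward and backward passes per update.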

Saving and Deployment

Local Saving (LoRA)

The script automatically saves LoRA adapters to the OUTPUT_DIR.

    model.save_pretrained("gemma_4_lora")
    tokenizer.save_pretrained("gemma_4_lora")

Save merged model (for vLLM)

For deployment with vLLM, merge the adapters into a full model:

    model.save_pretrained_merged("gemma-4-finetune", tokenizer)

Export GGUF (for llama.cpp)

Convert directly to GGUF for local inference:

    model.save_pretrained_gguf("gemma_4_finetune", tokenizer, quantization_method="Q8_0")

Explore Lower-Memory (4-bit) Fine-Tuning

This playbook uses standard LoRA fine-tuning. If you need lower memory usage with minimal quality loss, a natural next step is to explore QLoRA with a supported 4-bit model and runtime stack.

QLoRA keeps the same adapter-based training idea as LoRA, but uses a quantized 4-bit base model underneath. This can reduce memory usage further, but compatibility depends on the model, backend, and low-bit kernel support in the software stack.

Before switching to QLoRA, verify that your chosen model and your AMD hardware/software environment support the required quantized runtime path.
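For a rough sense of the savings, the sketch below estimates the memory taken by the base model weights alone at 16-bit and 4-bit precision. The parameter count is a hypothetical round number, not a measured value for any specific model, and the estimate ignores quantization metadata, activations, adapter weights, and optimizer state.

```python
def weight_memory_gib(num_params: int, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in GiB."""
    return num_params * bits_per_param / 8 / 2**30


# Hypothetical parameter count, for illustration only.
num_params = 8_000_000_000

mem_16bit = weight_memory_gib(num_params, 16)
mem_4bit = weight_memory_gib(num_params, 4)
print(f"16-bit weights: {mem_16bit:.1f} GiB")  # about 14.9 GiB
print(f" 4-bit weights: {mem_4bit:.1f} GiB")   # about 3.7 GiB
```

Real usage will be higher than these figures, so treat them as a lower bound when deciding whether a model fits on your hardware.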

An example of how you can enable 4-bit quantization by loading a 4-bit quantized model:

    load_in_4bit = True
    model_name = "unsloth/gemma-4-E4B-it-unsloth-bnb-4bit"

Next Steps

- Train on your own specific datasets

- Try fine-tuning with different hyperparameters

- Experiment with different quantization levels to understand the tradeoff between memory usage and quality

- Deploy with vLLM or llama.cpp

- Try QLoRA for a lower-memory setup

Need help with this playbook?

Run into an issue or have a question? Open a GitHub issue and our team will take a look.