Speech-to-Speech Translation

Build a real-time speech-to-speech translation pipeline on your local hardware.

Overview

The AMD ROCm™ software and PyTorch stack provides a unified ecosystem for on-device AI. It runs on both Windows and Linux, with official support for a wide range of devices, including Ryzen™ AI APUs and Radeon™ GPUs.

This playbook will teach you how to run low-latency, expressive, and private speech-to-speech translation entirely on the edge.

What You’ll Learn

- How to set up a speech-to-speech environment

- How to write Python code to load and use speech-to-speech models

- How to run and experiment with the Gradio UI

Why use real-time speech-to-speech translation?

- Removes the friction that language barriers add to a conversation

- Conveys tone, emotion, and intent without awkward pauses

- Enables global collaboration and faster decision-making

Setting Up Your Environment

Create a Virtual Environment

On Windows, if ROCm and PyTorch are already installed on your system, open a terminal in the directory of your choice and run the following commands to create a venv that can use them:

    python -m venv s2st-env --system-site-packages
    s2st-env\Scripts\activate

On Linux, if ROCm and PyTorch are already installed on your system, open a terminal and run the following commands to create a venv that can use them:

    sudo apt update
    sudo apt install -y python3-venv
    python3 -m venv s2st-env --system-site-packages
    source s2st-env/bin/activate

On Windows, to start from a clean venv instead, open a terminal in the directory of your choice and run the following commands:

    python -m venv s2st-env
    s2st-env\Scripts\activate

On Linux, to start from a clean venv instead, open a terminal and run the following commands:

    sudo apt update
    sudo apt install -y python3-venv
    python3 -m venv s2st-env
    source s2st-env/bin/activate

Installing Basic Dependencies

ROCm

Add the current user to the render and video groups.

    sudo usermod -a -G render,video $LOGNAME

Restart your system to apply the settings.

    sudo reboot

Install ROCm in the created virtual environment, using the index URL that matches your GPU target.

For gfx1151:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1151/ "rocm[libraries,devel]"

For gfx1152:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1152/ "rocm[libraries,devel]"

For gfx1150:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx1150/ "rocm[libraries,devel]"

For gfx120x-all:

    python -m pip install --upgrade pip
    python -m pip install --index-url https://repo.amd.com/rocm/whl/gfx120x-all/ "rocm[libraries,devel]"

For further installation help, please see this link.

PyTorch

Install PyTorch with AMD ROCm™ software support in the created virtual environment:

Use the index URL that matches your GPU target.

For gfx1151:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1151/ torch torchvision torchaudio

For gfx1152:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1152/ torch torchvision torchaudio

For gfx1150:

    python -m pip install --upgrade pip
    python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1150/ torch torchvision torchaudio

See this link for details.
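Before installing the remaining packages, it can help to confirm that the PyTorch build in the venv actually sees the GPU. The sketch below is not part of the playbook's scripts, and `describe_torch_backend` is a hypothetical helper name. It relies on the fact that ROCm builds of PyTorch expose AMD GPUs through the `torch.cuda` API and report a HIP version in `torch.version.hip`.

```python
def describe_torch_backend() -> dict:
    """Summarize the PyTorch install (hypothetical helper, not from the playbook)."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed in the active environment.
        return {"torch_installed": False}

    info = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # None on CUDA or CPU-only builds; a version string on ROCm builds.
        "hip_version": getattr(torch.version, "hip", None),
        # ROCm devices are reported through the torch.cuda namespace.
        "gpu_available": torch.cuda.is_available(),
    }
    if info["gpu_available"]:
        info["gpu_name"] = torch.cuda.get_device_name(0)
    return info


if __name__ == "__main__":
    for key, value in describe_torch_backend().items():
        print(f"{key}: {value}")
```

If `gpu_available` comes back as `False` on a supported device, the installed wheel most likely does not match the GPU target, and rerunning the install step with the appropriate index URL is a reasonable first thing to try.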

Additional Dependencies

Install m4t dependencies using pip:

    pip install transformers==4.57.1 safetensors==0.6.2 tiktoken==0.9.0 accelerate soundfile==0.13.1 sentencepiece protobuf gradio scipy==1.15.3

Set up the speech-to-speech demo

Learn about seamless-m4t-v2

Check out the model card on Hugging Face for more information.

This is the technical architecture of the speech-to-speech models:

Download Scripts

This playbook includes ready-to-use scripts. Please download all of them to the same directory as the environment you created.

| Script | Description | Usage |
|---|---|---|
| infer.py | Basic speech-to-speech translation inference | python infer.py |
| input1.wav | Example audio file | N/A |
| | Language support file | N/A |
| gradio_demo.py | Intuitive UI for speech translation | python gradio_demo.py --no-share |

Starting with infer.py

To execute the script, run:

    python infer.py

Explaining the Code
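The five snippets below are building blocks: load the model, preprocess the input, run inference, and save the result. As orientation, here is a minimal sketch of how they might be chained. The `translate_file` wrapper and its parameters are assumptions for illustration (the entry point of `infer.py` is not reproduced in this playbook), and the four step functions are passed in as arguments only so that the sketch stays self-contained.

```python
from typing import Any, Callable, Tuple


def translate_file(
    load_model: Callable[[str, Any], Tuple[Any, Any]],
    preprocess_audio: Callable[[str], Any],
    run_inference: Callable[..., Tuple[Any, float]],
    save_audio: Callable[[Any, str, int], None],
    device: Any,
    model_id: str = "facebook/seamless-m4t-v2-large",
    input_path: str = "./input1.wav",
    output_path: str = "./out1.wav",
    target_lang: str = "eng",
    # Assumption: 16 kHz output. The Hugging Face examples read the real
    # value from model.config.sampling_rate.
    output_sample_rate: int = 16_000,
) -> float:
    """Chain the four steps and return the inference time in seconds."""
    processor, model = load_model(model_id, device)
    audio = preprocess_audio(input_path)
    audio_array, elapsed = run_inference(model, processor, audio, device, target_lang)
    save_audio(audio_array, output_path, output_sample_rate)
    return elapsed
```

With the playbook's functions in scope, a call might look like `translate_file(load_model, preprocess_audio, run_inference, save_audio, device=torch.device("cuda" if torch.cuda.is_available() else "cpu"))`.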

Snippet 1: Importing the necessary dependencies

    import os
    os.environ["HIP_VISIBLE_DEVICES"] = "0"

    import time
    import numpy as np
    import scipy.io.wavfile
    import soundfile as sf
    import torch
    import torchaudio

    from transformers import AutoProcessor, SeamlessM4Tv2Model

    # ============ Configuration ============
    DEFAULT_TARGET_LANGUAGE = "eng"

    INPUT_AUDIO_PATH = "./input1.wav"
    OUTPUT_AUDIO_PATH = "./out1.wav"

    # Automatically downloads + caches via Hugging Face
    MODEL_ID = "facebook/seamless-m4t-v2-large"

    TARGET_SAMPLE_RATE = 16_000

Snippet 2: Loading the models from HuggingFace

This function takes in a model ID and downloads the model if not already downloaded. It then returns the processor and model for the next function to use.

    def load_model(model_id: str, device: torch.device):
        start = time.time()

        print("Loading model (downloads automatically on first run)...")

        processor = AutoProcessor.from_pretrained(model_id)

        dtype = torch.float16 if device.type == "cuda" else torch.float32

        model = SeamlessM4Tv2Model.from_pretrained(model_id, torch_dtype=dtype).to(device)

        elapsed = time.time() - start
        print(f"Model loading duration: {elapsed:.2f} seconds")

        return processor, model

Snippet 3: Input audio clip .wav file and preprocess it

This function loads the audio clip and resamples it to the target rate.

    def preprocess_audio(audio_path: str, target_sr: int = TARGET_SAMPLE_RATE) -> torch.Tensor:

        audio_np, orig_freq = sf.read(audio_path, dtype="float32", always_2d=True)

        # Convert to tensor [channels, samples]
        audio = torch.from_numpy(audio_np.T)

        # Resample if needed
        if orig_freq != target_sr:
            audio = torchaudio.functional.resample(audio, orig_freq=orig_freq, new_freq=target_sr)

        # Convert stereo -> mono
        if audio.shape[0] > 1:
            audio = torch.mean(audio, dim=0, keepdim=True)

        return audio

Snippet 4: Run inference

This function runs inference with the model and returns the generated output.

    def run_inference(model, processor, audio: torch.Tensor, device: torch.device, target_lang: str = DEFAULT_TARGET_LANGUAGE):

        start = time.time()

        audio_inputs = processor(
            audio=audio.squeeze(0).cpu().numpy(),
            sampling_rate=TARGET_SAMPLE_RATE,
            return_tensors="pt",
        )

        audio_inputs = {
            k: v.to(device) if isinstance(v, torch.Tensor) else v
            for k, v in audio_inputs.items()
        }

        with torch.inference_mode():
            output = model.generate(**audio_inputs, tgt_lang=target_lang)[0]

        audio_array = output.float().cpu().numpy().squeeze()

        elapsed = time.time() - start
        print(f"Inference duration: {elapsed:.2f} seconds")

        return audio_array, elapsed

Snippet 5: Save the translated file
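WAV files commonly store 16-bit integer samples, while the model produces floating-point audio, so saving involves a scale-and-clip step. The sketch below walks through that arithmetic in plain Python on a few hand-picked samples. `float_to_pcm16` is a hypothetical name used only for this illustration.

```python
from typing import List


def float_to_pcm16(samples: List[float]) -> List[int]:
    """Map float samples to 16-bit PCM integers (illustrative helper)."""
    peak = max((abs(s) for s in samples), default=0.0)
    # Rescale only if the signal exceeds the nominal [-1.0, 1.0] range.
    if peak > 1.0:
        samples = [s / peak for s in samples]
    pcm = []
    for s in samples:
        value = int(s * 32767.0)  # truncates toward zero
        pcm.append(max(-32768, min(32767, value)))  # clamp to the int16 range
    return pcm


print(float_to_pcm16([0.0, 0.5, -1.0, 1.0]))  # [0, 16383, -32767, 32767]
print(float_to_pcm16([0.0, 1.0, -2.0]))       # [0, 16383, -32767]
```

In the second call the peak is 2.0, so every sample is halved before scaling. The playbook's own implementation, which follows, applies the same steps to a whole array at once with NumPy.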

This function saves the audio array to a .WAV file.

    def save_audio(audio_array: np.ndarray, output_path: str, sample_rate: int):
        if np.issubdtype(audio_array.dtype, np.floating):
            max_abs = np.max(np.abs(audio_array)) if audio_array.size else 0.0

            if max_abs > 1.0:
                audio_array = audio_array / max_abs

            audio_array = (audio_array * 32767.0).clip(-32768, 32767).astype(np.int16)

        scipy.io.wavfile.write(output_path, rate=sample_rate, data=audio_array)

        print(f"Output saved to: {output_path}")

Running the Gradio UI demo:

Now that you have run a basic script example, the following instructions set up a UI that builds on the code above and makes live speech-to-speech translation easy.

Run Gradio Locally

    python ./gradio_demo.py --no-share

Then, open your web browser at http://127.0.0.1:7860 to access the UI.

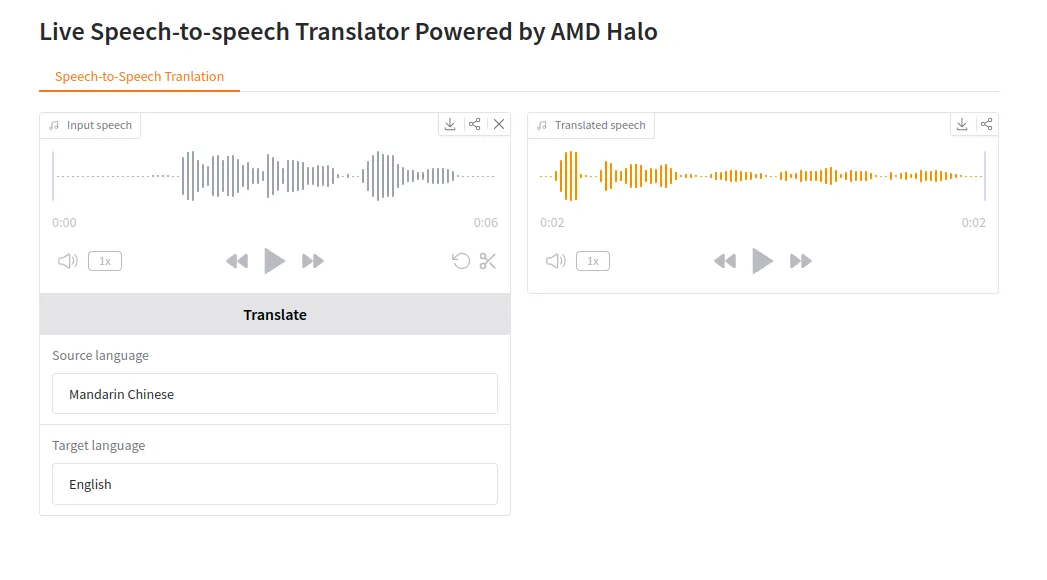

Gradio UI example:

Next Steps

- Mix and match between dozens of languages for quick translation.

- Share your demo with others: add --share to create a public link that anyone can access remotely, or deploy permanently using Hugging Face Spaces.
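The share flags used in this playbook correspond to Gradio's `share` option on `launch()`. Since `gradio_demo.py` itself is not reproduced here, the following is only a sketch of how such flags could be wired with `argparse`. `build_parser` and `launch_demo` are hypothetical names, and the real script may differ.

```python
import argparse
from typing import Any, Optional, Sequence


def build_parser() -> argparse.ArgumentParser:
    """Command-line flags for a demo script (hypothetical layout)."""
    parser = argparse.ArgumentParser(description="Speech translation demo (sketch)")
    group = parser.add_mutually_exclusive_group()
    group.add_argument("--share", dest="share", action="store_true",
                       help="create a temporary public Gradio link")
    group.add_argument("--no-share", dest="share", action="store_false",
                       help="serve on localhost only")
    # Assumption: local-only is the safer default when neither flag is given.
    parser.set_defaults(share=False)
    return parser


def launch_demo(demo: Any, argv: Optional[Sequence[str]] = None) -> None:
    """Launch a Gradio Blocks or Interface object according to the flags."""
    args = build_parser().parse_args(argv)
    demo.launch(share=args.share, server_name="127.0.0.1", server_port=7860)
```

Under this sketch, passing `--share` would request a temporary public link from Gradio, while `--no-share` (or no flag) keeps the UI reachable only at http://127.0.0.1:7860.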

Resources

Below are some additional resources to learn more about speech-to-speech translation:

- Model repository: https://huggingface.co/facebook/seamless-m4t-v2-large

- Research paper: “Seamless: Multilingual Expressive and Streaming Speech Translation”

- Gradio sharing and deployment: Sharing Your App Guide and Deploy to Hugging Face Spaces

Need help with this playbook?

Run into an issue or have a question? Open a GitHub issue and our team will take a look.