- Generating images with ComfyUI and Z Image Turbo

- Automating Workflows with n8n and Local LLMs

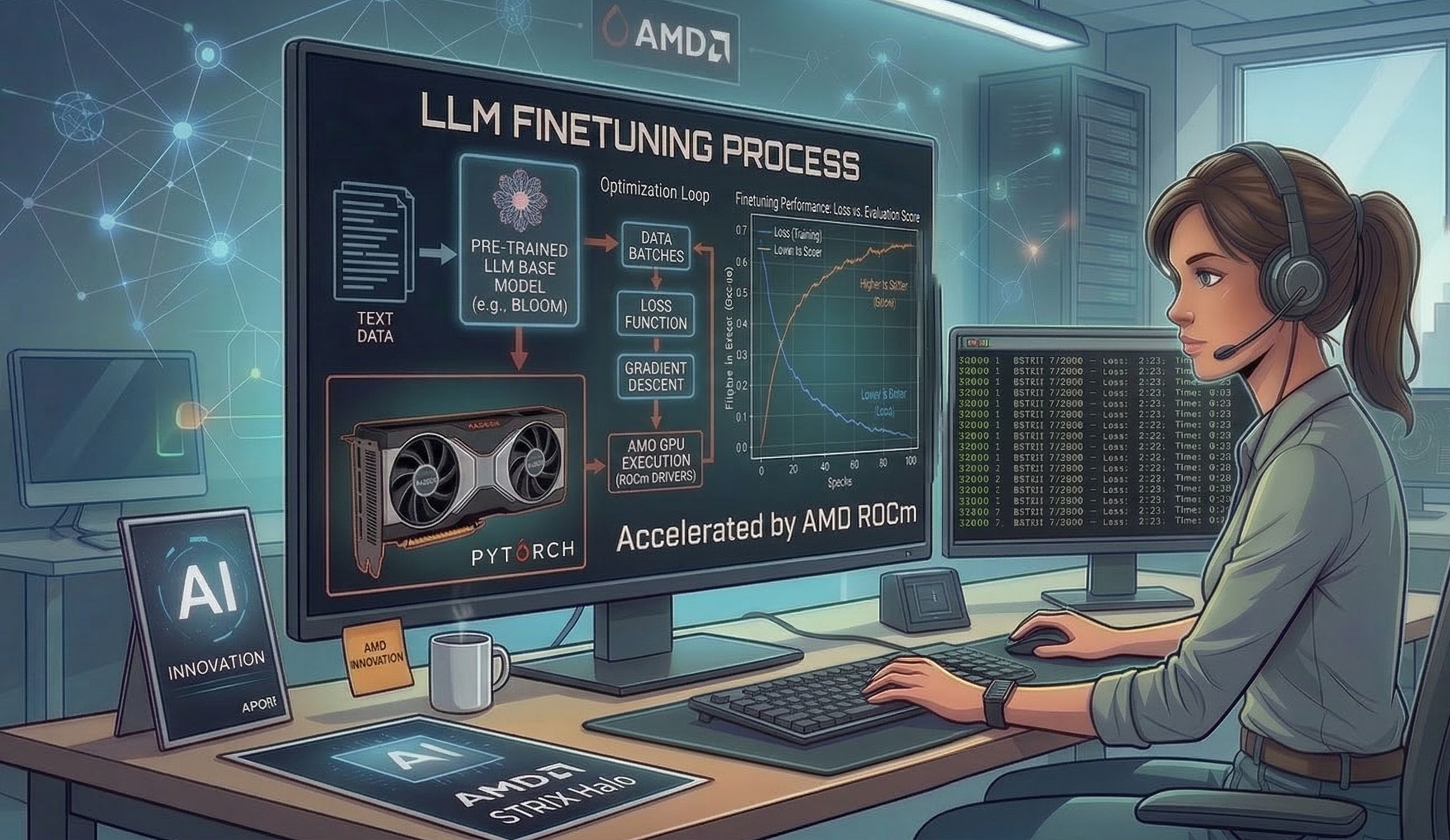

- Fine-tune LLMs with PyTorch and AMD ROCm™ Software

- How to Chat with LLMs in Open WebUI

- Local LLM Coding with VS Code and Qwen3-Coder

- Optimized LLMs Fine-tuning with Unsloth

- Running and serving LLMs with LM Studio

- Running LLMs with PyTorch and AMD ROCm™ software

- Speech-to-Speech Translation

- Using Lemonade Across CPU, GPU, and NPU

Fine-tune LLMs with PyTorch and AMD ROCm™ Software

Fine-tune large language models (LLMs) using PyTorch and ROCm.

Overview

This tutorial provides step-by-step examples for fine-tuning a large language model (LLM) with PyTorch and ROCm. It covers several techniques, from standard fine-tuning to memory-efficient Parameter-Efficient Fine-Tuning (PEFT) strategies, so you can easily adapt models for your needs.

Model Used: google/gemma-3-4b-it (a gated model; see Enable HF authentication)

Hardware: AMD Radeon™ GPU with ROCm support

Framework: PyTorch + Hugging Face (Transformers, PEFT, Transformer Reinforcement Learning (TRL))

Quick Start

1. Install Dependencies

Windows:

```bat
python -m venv venv
venv\Scripts\activate.bat
```

Linux:

```bash
sudo apt update
sudo apt install -y python3-venv
python3 -m venv venv
source venv/bin/activate
```

Installing Basic Dependencies

PyTorch

Install PyTorch with AMD ROCm™ software support in the created virtual environment:

Use the index URL that matches your GPU architecture.

For gfx1151:

```bash
python -m pip install --upgrade pip
python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1151/ torch torchvision torchaudio
```

For gfx1152:

```bash
python -m pip install --upgrade pip
python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1152/ torch torchvision torchaudio
```

For gfx1150:

```bash
python -m pip install --upgrade pip
python -m pip install --force-reinstall --no-cache-dir --index-url https://repo.amd.com/rocm/whl/gfx1150/ torch torchvision torchaudio
```

See this link for details.

Additional Dependencies

Linux:

```bash
pip install transformers==4.57.1 safetensors==0.6.2 accelerate peft trl bitsandbytes "fsspec[http]>=2023.1.0,<=2025.9.0"
```

Windows: Only core packages are tested and supported here. bitsandbytes is not well supported on Windows, so the Windows install omits it; use LoRA or full fine-tuning on Windows (QLoRA requires bitsandbytes and is intended for Linux).

```bat
pip install transformers==4.57.1 safetensors==0.6.2 datasets==4.2.0 accelerate peft trl "fsspec[http]>=2023.1.0,<=2025.9.0"
```

Enable HF authentication (gated or custom / non–preinstalled models)

In this example we use google/gemma-3-4b-it, which is a gated model. You must accept the model’s terms on Hugging Face and then authenticate so the training scripts can download it.

- Accept the license: Open https://huggingface.co/google/gemma-3-4b-it, sign in (or create an account), and accept the license/terms on the model page (e.g. “Agree and access repository”).

- Install and log in: Install the Hugging Face CLI, then run the standard login:

```bash
pip install huggingface_hub
hf auth login
```

2. Choose Your Method

| Method | Memory | Speed | Quality | Best For |
|---|---|---|---|---|
| QLoRA | 12-16GB | Fastest | 90-95% | Low memory usage |
| LoRA | 24-32GB | Fast | 95-98% | Balanced approach |
| Full | 80GB+ | Slowest | 100% | Maximum quality |
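The table can also be read as a simple decision rule. The sketch below is only an illustration: `suggest_method` is a hypothetical helper, not part of the training scripts, and its thresholds are the lower bounds of the memory ranges in the table above. It also assumes QLoRA is available on Linux only, since the Windows install in this guide omits bitsandbytes.

```python
def suggest_method(vram_gb: float, platform: str = "linux") -> str:
    """Suggest a fine-tuning method from available VRAM.

    Thresholds are the lower bounds from the comparison table;
    treat them as rough guidance, not hard limits.
    """
    if vram_gb >= 80:
        return "full"
    if vram_gb >= 24:
        return "lora"
    # QLoRA needs bitsandbytes, which this guide installs on Linux only.
    if vram_gb >= 12 and platform == "linux":
        return "qlora"
    return "none"


if __name__ == "__main__":
    for vram in (16, 32, 96):
        print(vram, "GB ->", suggest_method(vram))
```

A result of `"none"` means none of the three methods fits the stated ranges; reducing sequence length or batch size may still make a run possible.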

3. Run Training

Dataset and what the model learns

The scripts turn the dataset into chat examples. For example, the QLoRA script uses Abirate/english_quotes: each example becomes a user–assistant pair like:

- User: “Give me a quote about: <tag>”

- Assistant: “<quote> – <author>”

Fine-tuning teaches the model to respond to prompts asking for quotes about a topic and to return them in the format “<quote> – <author>”. The LoRA and full fine-tuning scripts use databricks/databricks-dolly-15k (general instruction/response pairs), so the exact task varies by script, but the idea is the same: adapt the model to your chosen dataset and format.
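To make the conversion concrete, here is a minimal sketch of how one quote record could be turned into a user–assistant pair. It is not the QLoRA script's actual code: the function name `quote_to_messages` is hypothetical, the field names `quote`, `author`, and `tags` are assumptions about the dataset schema, and using the first tag (with a `"general"` fallback) is an illustrative choice.

```python
def quote_to_messages(example: dict) -> dict:
    """Convert one quote record into a chat-style training example."""
    tags = example.get("tags") or []
    # Assumption: use the first tag as the topic; fall back to a generic one.
    tag = tags[0] if tags else "general"
    return {
        "messages": [
            {"role": "user", "content": f"Give me a quote about: {tag}"},
            {
                "role": "assistant",
                "content": f"{example['quote']} – {example['author']}",
            },
        ]
    }


if __name__ == "__main__":
    record = {
        "quote": "Be yourself.",
        "author": "Oscar Wilde",
        "tags": ["be-yourself", "honesty"],
    }
    print(quote_to_messages(record))
```

With the Hugging Face `datasets` library, a function like this would typically be applied to every record via `dataset.map(...)`.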

Below is a summary of the available training methods, with a brief description of each to help you choose the right approach.

| Method | Description | Typical VRAM | Recommended For |
|---|---|---|---|
| LoRA | Trains small adapter matrices while freezing the base model. 3–5x faster; ~95–98% of full fine-tuning quality. | 24–32GB | Advanced users; multiple adapters; more VRAM |
| QLoRA (Linux only) | 4-bit quantization + LoRA adapters. Lowest memory use, fastest, small quality trade-off. Requires bitsandbytes (Linux only). | 12–16GB | Most users; fast experiments; limited VRAM |
| Full Fine-tuning | Updates all model parameters. Maximum quality; highest memory and compute usage. | 40GB+ | Maximum quality; research; large VRAM |

Understanding the Techniques

What is LoRA?

LoRA (Low-Rank Adaptation) keeps the base model frozen and only trains small “adapter” matrices that get added to certain layers.

- The key idea: instead of updating a huge weight matrix with millions of parameters, we learn a low-rank update (two small matrices that together have far fewer parameters than the full matrix). That gives a large reduction in trainable parameters and VRAM while keeping most of the full fine-tuning quality.
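The size of that reduction is easy to check with plain arithmetic. The sketch below counts trainable parameters for a single weight matrix with and without a low-rank update; `lora_param_counts` is a hypothetical helper, and the 4096×4096 layer with rank 32 is only an illustrative configuration.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int):
    """Return (full, lora, reduction) parameter counts for one weight matrix.

    full: parameters in the dense d_out x d_in matrix
    lora: parameters in B (d_out x rank) plus A (rank x d_in)
    reduction: fraction of trainable parameters saved by the low-rank update
    """
    full = d_out * d_in
    lora = d_out * rank + rank * d_in
    return full, lora, 1 - lora / full


if __name__ == "__main__":
    full, lora, reduction = lora_param_counts(4096, 4096, 32)
    print(f"full: {full:,}  lora: {lora:,}  reduction: {reduction:.1%}")
```

Doubling the rank doubles the adapter size, so the rank is the main knob for trading adapter capacity against memory.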

```text
# Instead of updating the full weight matrix W (16M params):
W_updated = W + ΔW

# LoRA decomposes the update into two small matrices:
W_updated = W + B × A
# B: 4096×32 matrix
# A: 32×4096 matrix
# Total: 262K params (98% reduction!)
```

What is QLoRA?

QLoRA combines 4-bit quantization with LoRA. The base model is loaded in 4-bit (large memory savings), and only the LoRA adapters are trained in higher precision. You get the parameter efficiency of LoRA plus much lower VRAM use, with a small quality trade-off compared to full-precision LoRA. Note that 4-bit quantization can cause numerical instabilities (loss spikes or NaNs), so LoRA is often the better choice when enough VRAM is available.

```text
Base Model (4-bit):    10GB  ← Frozen, quantized
LoRA Adapters (BF16):   2GB  ← Trainable, full precision
Total:                 12GB  (vs 40GB full precision)
```

Using your Fine-Tuned Model

After Full Fine-Tuning

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained(
    "output-gemma-3-4b-full",  # Directory containing your fully fine-tuned checkpoint
    device_map="auto",
    torch_dtype="auto",  # Use BF16 if your GPU supports it, else "auto"
)
tokenizer = AutoTokenizer.from_pretrained("output-gemma-3-4b-full")

# Generate text
prompt = "Explain quantum computing:"
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
outputs = model.generate(**inputs, max_new_tokens=200)
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```

After LoRA/QLoRA Training

```python
from peft import AutoPeftModelForCausalLM
from transformers import AutoTokenizer

# Load model with LoRA or QLoRA adapters
model = AutoPeftModelForCausalLM.from_pretrained(
    "output-gemma-3-4b-qlora",  # or "output-gemma-3-4b-lora" depending on your training
    device_map="auto",
    torch_dtype="auto",
)
tokenizer = AutoTokenizer.from_pretrained("output-gemma-3-4b-qlora")

# Generate text
prompt = "Explain quantum computing:"
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
outputs = model.generate(**inputs, max_new_tokens=200)
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```

Merge LoRA Adapter into Base Model

```python
# Merge LoRA/QLoRA adapter weights into the base model for standalone inference
merged_model = model.merge_and_unload()
merged_model.save_pretrained("gemma-3-4b-merged")
tokenizer.save_pretrained("gemma-3-4b-merged")
```

For more custom settings (padding tokens, device, etc.), refer to the script that you used for training.

Customization Guide

Use your Own Dataset

All scripts use the same dataset format. Replace the loading section:

```python
from datasets import load_dataset

# Choose ONE of the loading options below.

# Option 1: Local JSON/JSONL file
dataset = load_dataset('json', data_files='your_data.json')

# Option 2: Hugging Face Hub dataset
dataset = load_dataset('username/dataset-name')

# Option 3: CSV file
dataset = load_dataset('csv', data_files='data.csv')

# Format for chat models
def format_instruction(example):
    return {
        "messages": [
            {"role": "user", "content": example['instruction']},
            {"role": "assistant", "content": example['response']},
        ]
    }

dataset = dataset.map(format_instruction)
```

Dataset Format:

```json
[
  {
    "messages": [
      {"role": "user", "content": "Your instruction here"},
      {"role": "assistant", "content": "Expected response here"}
    ]
  }
]
```

Adjust Training Parameters

Edit the training script and change the variables to match your goals: learning rate (LR), epochs (EPOCHS), batch size (BATCH_SIZE), gradient accumulation (GRAD_ACCUM_STEPS), and for LoRA/QLoRA rank (LORA_R). For faster runs use fewer epochs and a higher learning rate (LR); for better quality use more epochs and a lower LR. Reduce batch size or sequence length if you hit out-of-memory errors.
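Batch size and gradient accumulation interact: the optimizer sees an effective batch of `BATCH_SIZE × GRAD_ACCUM_STEPS` examples per update. The sketch below shows that relationship and a rough count of optimizer steps. It assumes a single GPU; `training_schedule` is a hypothetical helper, and real trainers may round the last partial batch differently.

```python
import math


def training_schedule(num_examples: int, batch_size: int,
                      grad_accum_steps: int, epochs: int):
    """Return (effective_batch_size, approximate_optimizer_steps)."""
    effective_batch = batch_size * grad_accum_steps
    # One optimizer step consumes one effective batch of examples.
    steps_per_epoch = math.ceil(num_examples / effective_batch)
    return effective_batch, steps_per_epoch * epochs


if __name__ == "__main__":
    # Two settings with the same effective batch size but different memory use.
    print(training_schedule(1000, batch_size=4, grad_accum_steps=4, epochs=3))
    print(training_schedule(1000, batch_size=1, grad_accum_steps=16, epochs=3))
```

Both settings give the same effective batch size, which is why lowering the batch size while raising gradient accumulation is a common way to save memory without changing the optimization behavior much.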

Memory Optimization Tips

If you encounter out-of-memory errors:

1. Reduce Batch Size:

```python
BATCH_SIZE = 1
GRAD_ACCUM_STEPS = 16  # Maintain effective batch size
```

2. Reduce Sequence Length:

```python
max_seq_length=256  # Instead of 512
```

3. Switch to a More Memory-Efficient Method:

```text
Full → LoRA → QLoRA
```

4. Enable Gradient Checkpointing (Full fine-tuning only):

```python
model.gradient_checkpointing_enable()
```

Monitoring & Debugging

Watch GPU Memory

```bash
# Check ROCm GPU status
watch -n 1 rocm-smi

# Show memory info
rocm-smi --showmeminfo vram
```

(Optional) Track Experiments with Weights & Biases

To log runs and metrics to Weights & Biases:

```bash
pip install wandb
wandb login
```

In the training script, set report_to="wandb" and optionally run_name="your-experiment-name" in the trainer config. If you prefer not to use Weights & Biases, leave report_to at its default or set it to "none".
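One way to keep this switch in a single place is to build the logging arguments once and pass them into the trainer config. The sketch below is an assumption about how you might organize it, not code from the training scripts: `logging_kwargs` is a hypothetical helper, and it presumes the trainer config accepts `report_to` and `run_name` as keyword arguments, as described above.

```python
from typing import Optional


def logging_kwargs(use_wandb: bool, run_name: Optional[str] = None) -> dict:
    """Build experiment-tracking keyword arguments for a trainer config."""
    kwargs = {"report_to": "wandb" if use_wandb else "none"}
    # A run name is only meaningful when tracking is enabled.
    if use_wandb and run_name:
        kwargs["run_name"] = run_name
    return kwargs


if __name__ == "__main__":
    print(logging_kwargs(True, "your-experiment-name"))
    print(logging_kwargs(False))
    # Hypothetical usage inside a training script:
    #   config = SFTConfig(output_dir="out", **logging_kwargs(True, "run-1"))
```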

Common Issues

Out of Memory (OOM)

Solution: Reduce batch size and/or use QLoRA

```python
BATCH_SIZE = 1
GRAD_ACCUM_STEPS = 16
# Or: python train_qlora.py
```

Loss Not Decreasing

Solution: Adjust learning rate

```python
LR = 1e-4  # Try lower
# or
LR = 5e-4  # Try higher
```

Slow Training

Solution: Increase batch size if memory allows

```python
BATCH_SIZE = 8
```

Next Steps

After you have completed successful fine-tuning, consider the following next steps to get more from your model:

- Evaluate thoroughly on held-out test data to measure generalization and avoid overfitting.

- Experiment by trying different hyperparameter values for better accuracy, speed, and memory trade-offs.

- Track all your experiments (and corresponding metrics) with Weights & Biases for reproducible research.

- Try training on your own custom datasets to adapt the model specifically for your use-case.

- Deploy your fine-tuned model for fast inference using efficient backends such as vLLM on compatible hardware.

- Explore advanced techniques including prompt engineering, mixed precision, and longer sequence lengths.

- Train multiple LoRA adapters for different tasks or domains and swap them as needed.
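For the first item in the list above, evaluation needs examples the model never saw during training. The sketch below shows a minimal, reproducible hold-out split in plain Python. `split_holdout` is a hypothetical helper, and the 10% test fraction and seed are illustrative defaults; the Hugging Face `datasets` library provides `Dataset.train_test_split` for the same purpose.

```python
import random


def split_holdout(examples, test_fraction: float = 0.1, seed: int = 42):
    """Shuffle examples deterministically and split into (train, test)."""
    items = list(examples)
    random.Random(seed).shuffle(items)  # Fixed seed keeps the split reproducible
    n_test = max(1, int(len(items) * test_fraction))
    return items[n_test:], items[:n_test]


if __name__ == "__main__":
    train, test = split_holdout(range(100))
    print(len(train), "train examples,", len(test), "test examples")
```

Keeping the seed fixed across experiments means every run is scored on the same held-out examples, which makes results comparable.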

Good luck with your fine-tuning journey! 🎉
