How to Chat with LLMs in Open WebUI

Use Open WebUI to chat with LLMs locally.

Overview

Open WebUI is a self-hosted, browser-based interface that provides a familiar chatbot experience while acting as a frontend for one or more AI model servers. Instead of being tied to one provider, Open WebUI can connect to any backend that exposes an OpenAI-compatible API, so you can swap models and capabilities without switching UIs.

In this playbook, we use Lemonade as the backend because it exposes a unified OpenAI-compatible endpoint supporting multiple modalities:

- Large Language Models (LLMs) for text generation

- Vision models for image understanding

- Stable Diffusion for image generation

- Audio transcription models for speech-to-text

This setup enables you to explore the complete multimodal workflow end-to-end.

Learning Objectives

By the end, you’ll be able to:

- Connect Open WebUI to a local OpenAI-compatible backend (Lemonade)

- Chat with a local LLM from your browser

- Upload an image and ask a vision model questions about it

- Generate images from text prompts using Stable Diffusion models (SD-Turbo / SDXL)

- Understand the mental model so you can use other backends (Ollama, vLLM, llama.cpp server, etc.)

Core Concepts (Mental Model)

The Three Components

| Piece | What it does | Examples |

|---|---|---|

| Frontend (UI) | The web app you interact with | Open WebUI |

| Backend (Model Server) | Hosts models and exposes HTTP endpoints | Lemonade, Ollama, vLLM, llama.cpp server, OpenAI-compatible servers |

| Models | The actual LLM / Vision / Diffusion / Audio models | CodeLlama, DeepSeek, Gemma-MM, SDXL, SD-Turbo, Whisper |

Why “OpenAI-compatible API” matters

Open WebUI is built around standard OpenAI-style endpoints, like:

- Chat: /chat/completions

- Models list: /models

- Image generation: /images/generations

- Audio transcription: /audio/transcriptions

Lemonade exposes these under http://localhost:13305/api/v1/...

If a backend supports those endpoints, Open WebUI can talk to it with minimal setup. That’s why we can switch backends without changing our workflow.
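As a rough illustration of why the base URL is the only thing that changes between backends, the sketch below joins the four routes listed above onto a base URL. The Lemonade base URL comes from this playbook; the second base URL and the helper name `endpoint_url` are hypothetical and only there to show the swap.

```python
# Sketch: the OpenAI-style routes named above, joined onto a backend base URL.
ENDPOINTS = {
    "chat": "/chat/completions",
    "models": "/models",
    "images": "/images/generations",
    "transcriptions": "/audio/transcriptions",
}

LEMONADE_BASE = "http://localhost:13305/api/v1"  # from this playbook
OTHER_BASE = "http://localhost:9000/v1"  # hypothetical second backend


def endpoint_url(base_url: str, name: str) -> str:
    """Join a backend base URL and one of the named OpenAI-style routes."""
    return base_url.rstrip("/") + ENDPOINTS[name]


# Same route names, different server: only the base URL differs.
for base in (LEMONADE_BASE, OTHER_BASE):
    print(endpoint_url(base, "chat"))
```

Nothing here contacts a server; it only shows that the frontend needs one setting (the base URL) to target a different backend.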

One-Time Setup

This section establishes a stable local environment: Lemonade running, Open WebUI running, and a working connection between them.

1. Install Lemonade, Start Lemonade Server, and Download Models

Lemonade

Installing Lemonade

Download the latest installer from lemonade-server.ai and run the .msi file.

After installation:

- The lemonade CLI is added to your system PATH automatically

- Lemonade server is expected to run in the background automatically

You can also install silently from the command line:

    msiexec /i lemonade-server-minimal.msi /qn

Ubuntu:

    sudo add-apt-repository ppa:lemonade-team/stable
    sudo apt install lemonade-server

Arch Linux (AUR):

    yay -S lemonade-server

For other distributions or to install from source, see the full installation options.

Verifying Lemonade Installation

Open a terminal and run:

    lemonade --version

You should see output like:

    lemonade version x.y.z

If you see a version number, Lemonade is installed correctly and ready to go.

For quick reference, here are common Lemonade CLI commands:

| Command | What it does |

|---|---|

| lemonade --help | Shows all available commands and flags. |

| lemonade --version | Prints the installed Lemonade version. |

| lemonade status | Confirms whether the Lemonade server is running and reachable. The default OpenAI-compatible API base URL is http://localhost:13305/api/v1. |

| lemonade list | Lists models available to your Lemonade setup. |

| lemonade pull <MODEL_NAME> | Downloads a model without launching it. |

| lemonade run <MODEL_NAME> | Downloads the model if needed, then starts it for inference/chat. |

| lemonade run <MODEL_NAME> --llamacpp rocm | Starts a llama.cpp model with the ROCm backend. |

| lemonade run <MODEL_NAME> --llamacpp vulkan | Starts a llama.cpp model with the Vulkan backend. |

| lemonade config | Displays the current Lemonade configuration values. |

| lemonade config set llamacpp.backend=rocm | Sets the default llama.cpp backend to ROCm. |

For the latest Lemonade server options or troubleshooting, please refer to the official Lemonade documentation.

- Confirm the API is reachable: open http://localhost:13305/api/v1/models in your web browser.

- Download the desired models in Lemonade.

If you don’t see your models at http://localhost:13305/api/v1/models, Open WebUI won’t be able to select them later.
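The same check can be scripted. The sketch below assumes the server returns the usual OpenAI-style list body (a `data` array of objects with an `id` field); the helper names and the sample body are illustrative, not actual Lemonade output.

```python
import json
import urllib.request

BASE_URL = "http://localhost:13305/api/v1"  # Lemonade base URL from this playbook


def parse_model_ids(payload: dict) -> list[str]:
    """Extract model ids from an OpenAI-style /models response body."""
    return [entry["id"] for entry in payload.get("data", [])]


def fetch_model_ids(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """GET <base_url>/models and return the ids (needs a running server)."""
    with urllib.request.urlopen(f"{base_url}/models", timeout=timeout) as resp:
        return parse_model_ids(json.load(resp))


# Offline illustration with a hand-written sample body (not real server output).
sample = {"object": "list", "data": [{"id": "example-model-a"}, {"id": "example-model-b"}]}
print(parse_model_ids(sample))
```

With Lemonade running, calling `fetch_model_ids()` should return the same names that later appear in the Open WebUI model dropdown; an empty list suggests no models have been downloaded yet.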

2. Install Open WebUI

Open PowerShell and create a fresh virtual environment:

    # Install open-webui into a venv [Windows]
    python -m venv openwebui-venv
    .\openwebui-venv\Scripts\activate
    python -m pip install --upgrade pip
    pip install open-webui beautifulsoup4

Open a terminal and create a fresh virtual environment:

    # Install open-webui into a venv [Linux]
    python3 -m venv openwebui-venv
    source openwebui-venv/bin/activate
    python3 -m pip install --upgrade pip
    pip install open-webui beautifulsoup4

Tip (Python version): Install Open WebUI using Python 3.12. The open-webui PyPI package may not install on Python 3.13+ (you’ll see “No matching distribution found”).

Note: Open WebUI also provides a variety of other installation options, such as Docker, on their GitHub.

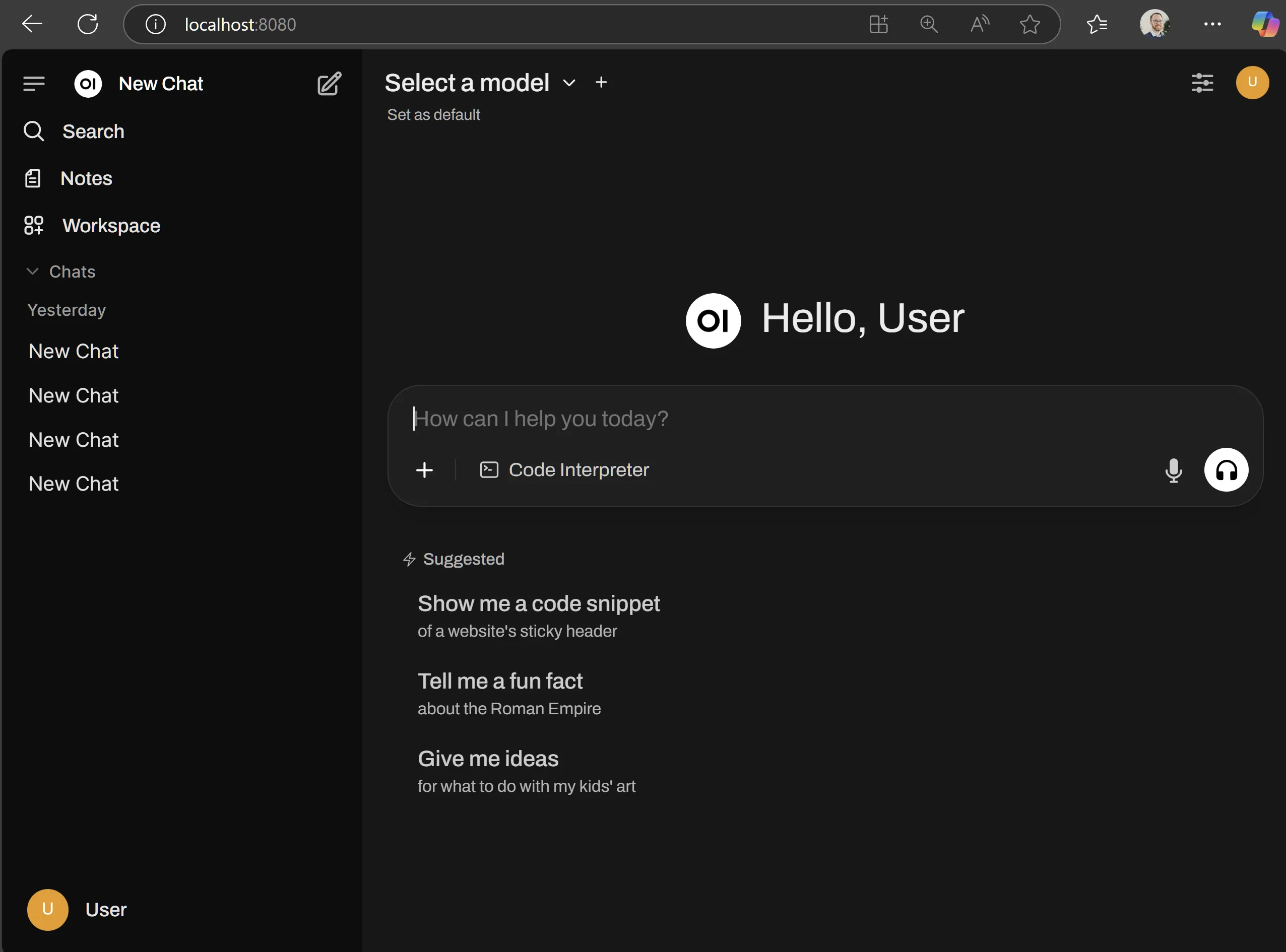

3. Start Open WebUI Server

- Run the following command to launch the Open WebUI HTTP server:

    open-webui serve

- In a browser, navigate to http://localhost:8080.

- Open WebUI will ask you to create a local administrator account. Once you are signed in, you will see the chat interface.

Keep the terminal window open. Closing it stops Open WebUI.

4. Connect Open WebUI to Lemonade

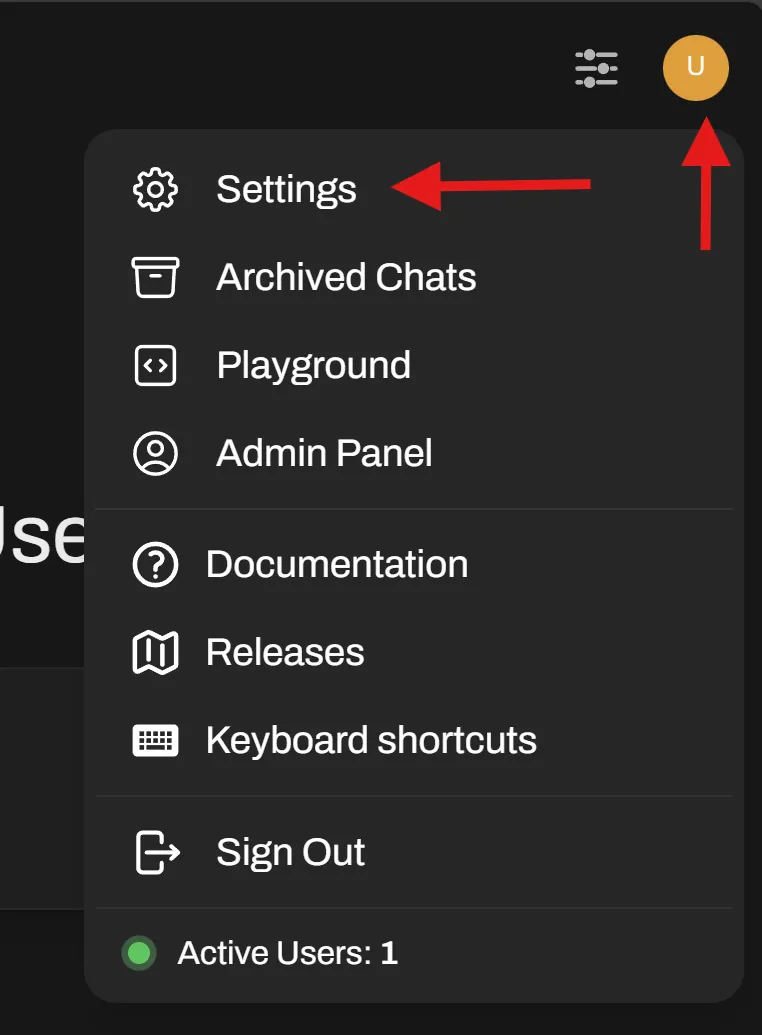

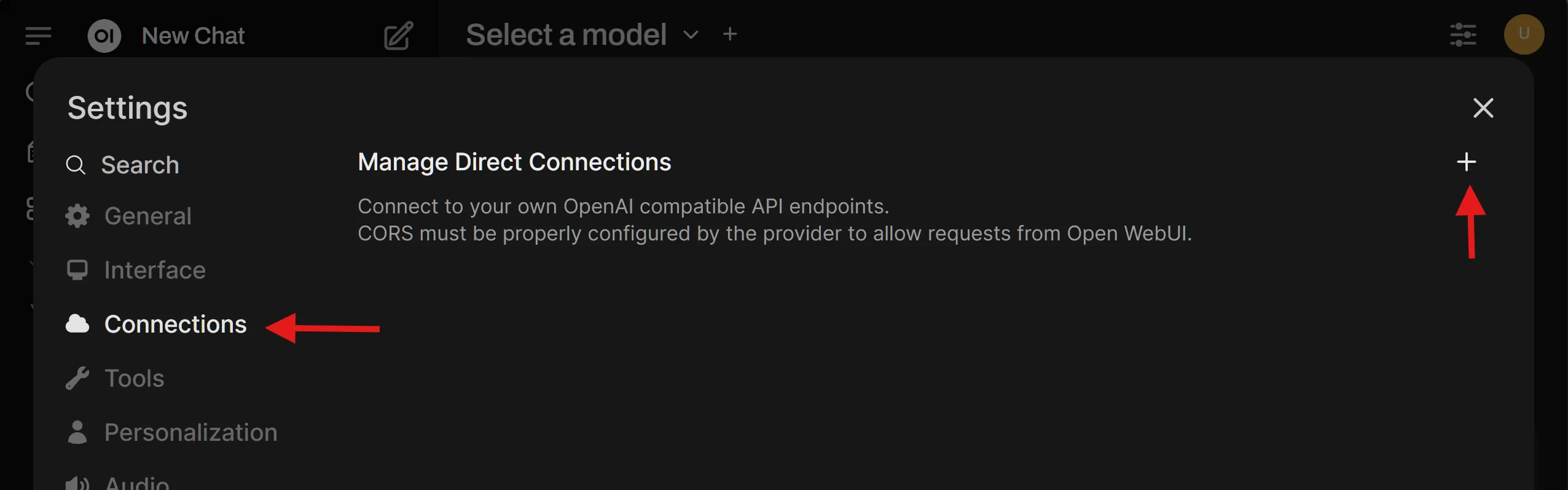

In Open WebUI:

- Go to Admin Settings → Connections (http://localhost:8080/admin/settings/connections):

-

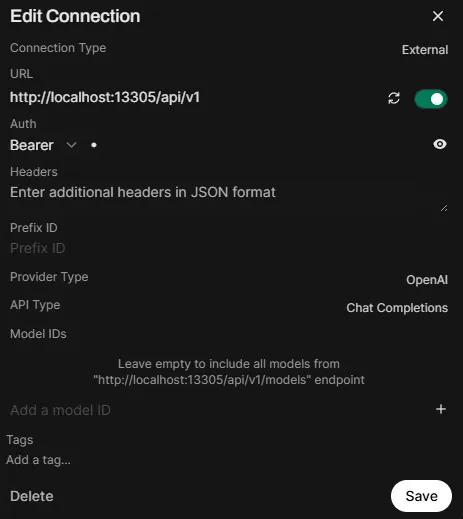

Under OpenAI API, add a new connection:

- Base URL:

http://localhost:13305/api/v1 - API Key:

-(a single dash works for local)

- Base URL:

-

In http://localhost:8080/admin/settings/connections, ensure that under “Manage OpenAI API Connections”, only

http://localhost:13305/api/v1is enabled.

-

Save

-

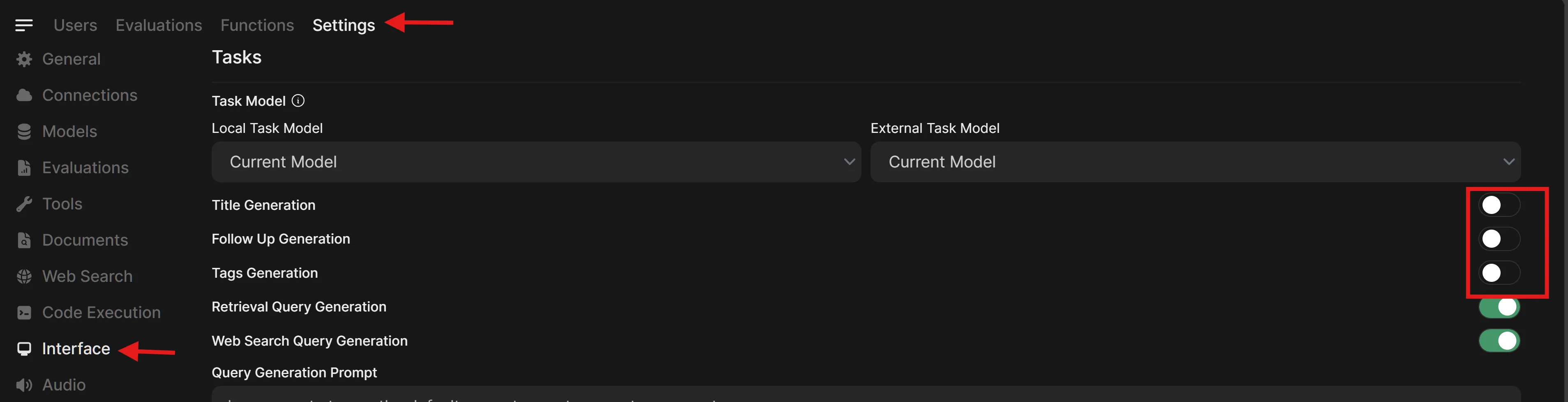

Apply the following suggested settings. These help Open WebUI be more responsive with local LLMs.

- Click the user profile button again, and choose “Admin Settings”.

- Click the “Settings” tab at the top, then “Interface” (which will be on the top or the left, depending on your window size), then disable the following:

- Title Generation

- Follow Up Generation

- Tags Generation

-

Click the “Save” button in the bottom right of the page, then return to

http://localhost:8080. -

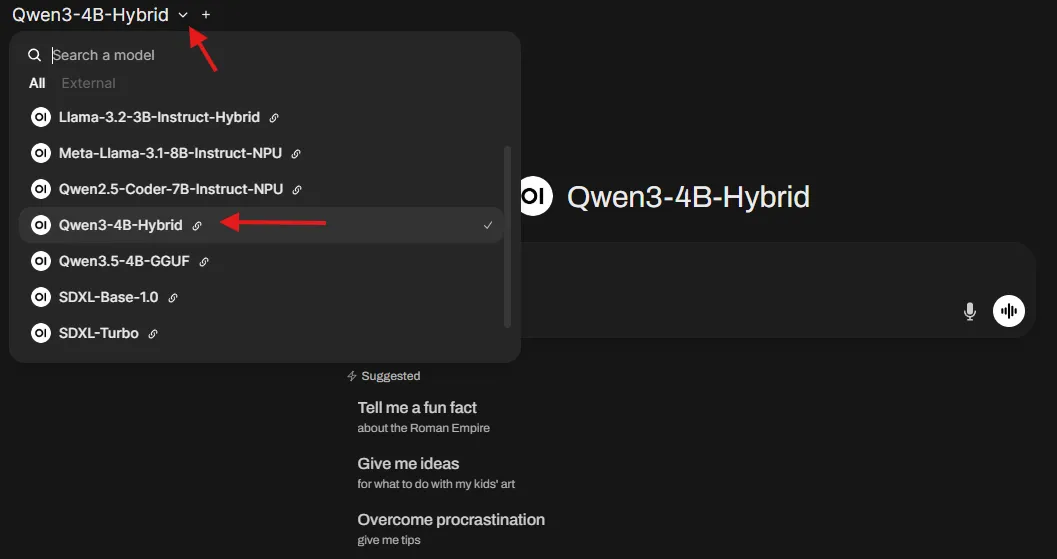

Click the model dropdown and you should see the models that you have downloaded from Lemonade.

Main Activities

Now, you’re all set up. Let’s look at three interesting things to do.

Activity 1: Chat with a Local LLM

-

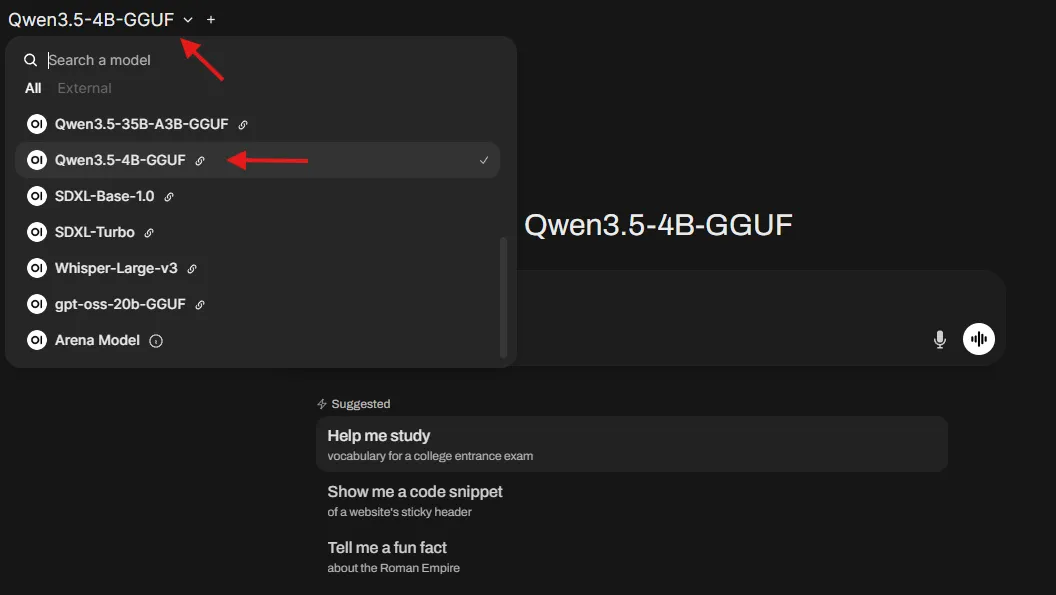

Click the dropdown menu in the top-left of the interface. This will display the Lemonade models you have installed. Select one to proceed. (example:

Qwen3-4B-Hybrid).

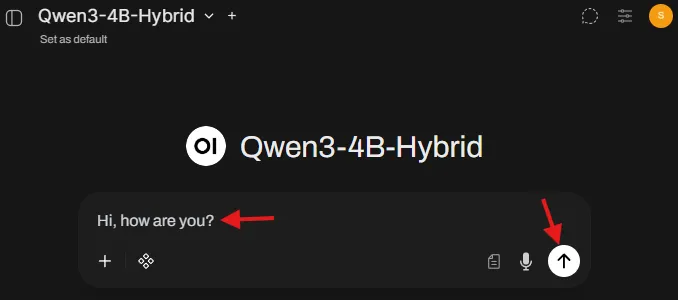

-

Enter a message to the LLM and click send (or hit Enter). The LLM will take a few seconds to load into memory and then you will see the response stream in.

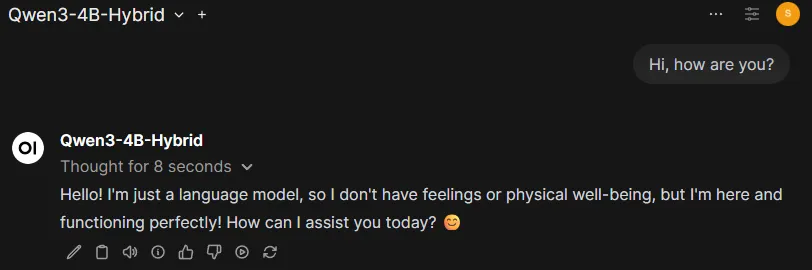

-

The model will respond in the chat.

-

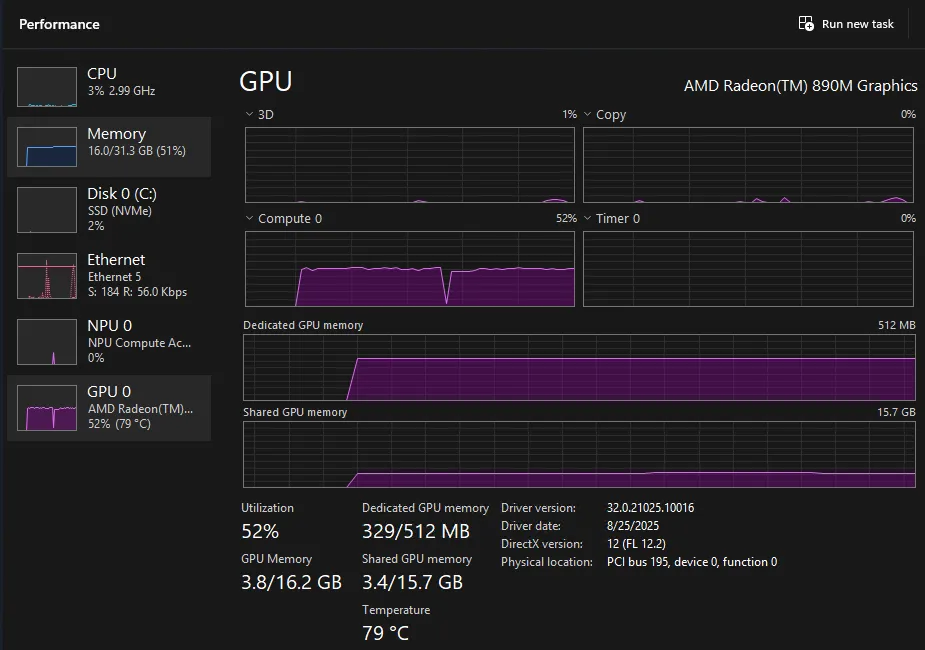

At this time, open

Task Manageron your system. You will see high GPU or NPU utilization based on whether the model you selected is Hybrid or NPU respectively. Using the task manager, you can confirm that you’re running the model locally.

-

Click the dropdown menu in the top-left of the interface. This will display the Lemonade models you have installed. Select one to proceed. (example:

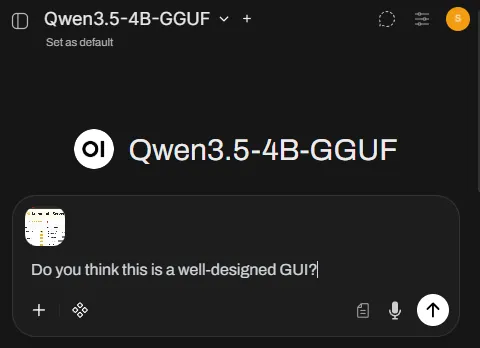

Qwen3.5-4B-GGUF).

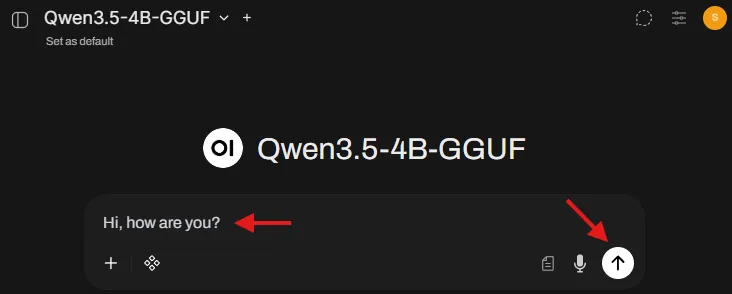

-

Enter a message to the LLM and click send (or hit Enter). The LLM will take a few seconds to load into memory and then you will see the response stream in.

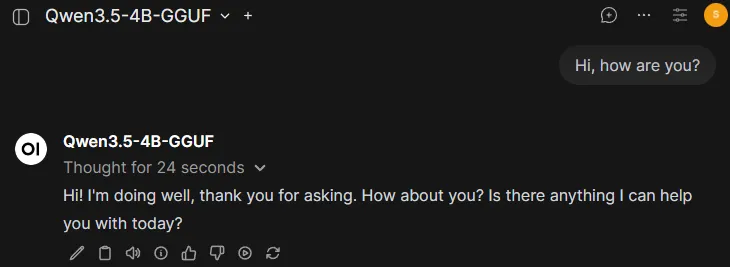

-

The model will respond in the chat.

This validates that Open WebUI can send requests to Lemonade using the OpenAI-compatible chat endpoint.
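To see roughly what such a request looks like outside the UI, here is a minimal sketch that builds an OpenAI-style chat request with the standard library. The model name is the example from this playbook; sending the key as a `Bearer -` header and the helper names are assumptions, and the exact request Open WebUI sends may carry more fields.

```python
import json
import urllib.request

BASE_URL = "http://localhost:13305/api/v1"  # Lemonade base URL from this playbook


def build_chat_request(model: str, user_text: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a POST to the chat completions endpoint."""
    body = {"model": model, "messages": [{"role": "user", "content": user_text}]}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        # Assumption: the single-dash key is passed as a bearer token.
        headers={"Content-Type": "application/json", "Authorization": "Bearer -"},
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of an OpenAI-style chat response."""
    return response_body["choices"][0]["message"]["content"]


req = build_chat_request("Qwen3-4B-Hybrid", "Say hello in one sentence.")
print(req.get_method(), req.full_url)

# To actually send it (requires Lemonade running with the model available):
# with urllib.request.urlopen(req, timeout=120) as resp:
#     print(extract_reply(json.load(resp)))
```

The send step is left commented out so the snippet runs without a server; the generous timeout in that comment reflects the model load time mentioned above.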

Activity 2: Upload an Image and Ask Questions (Vision)

This requires a model that supports image input (a Vision or Multimodal model).

-

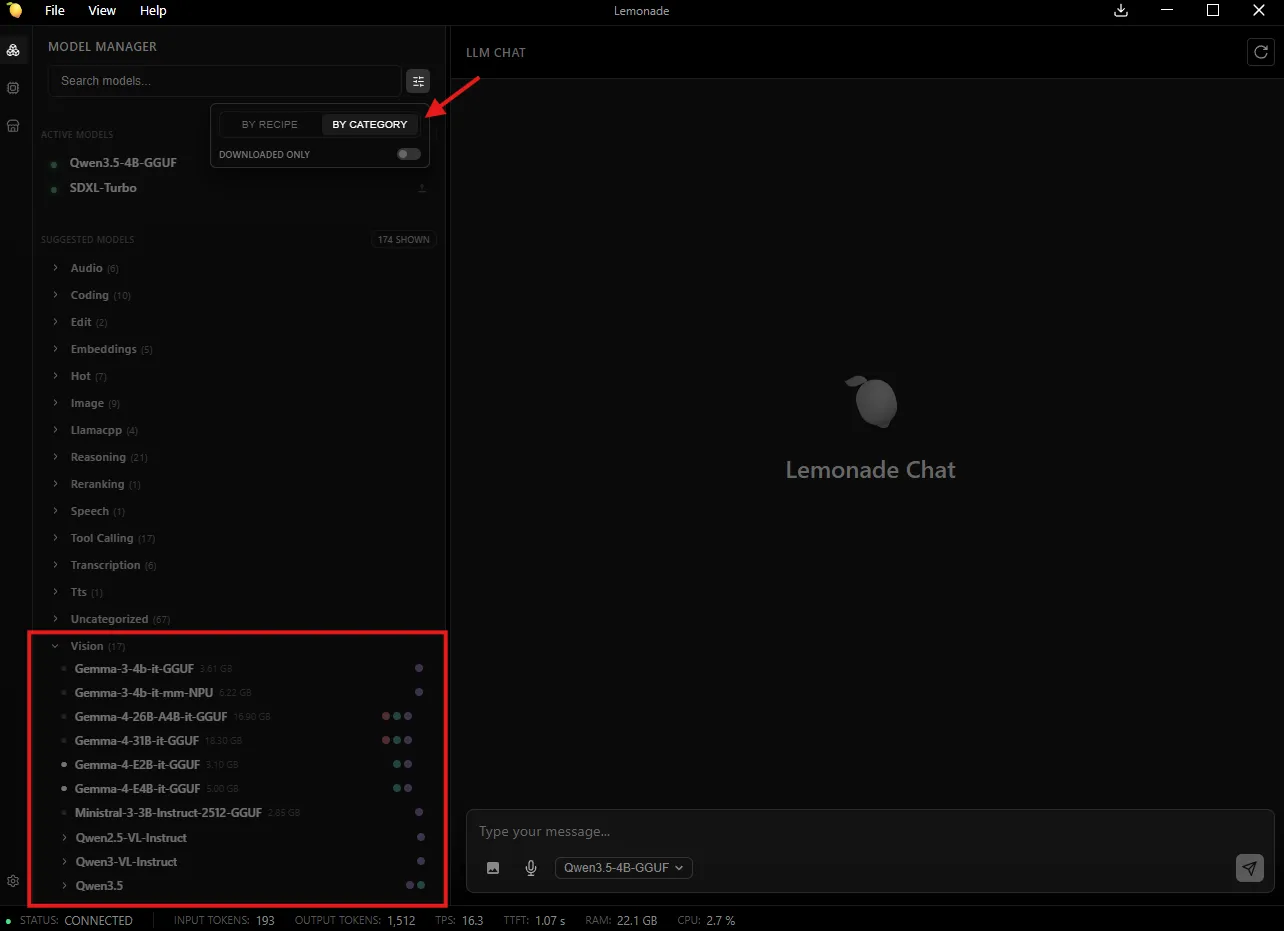

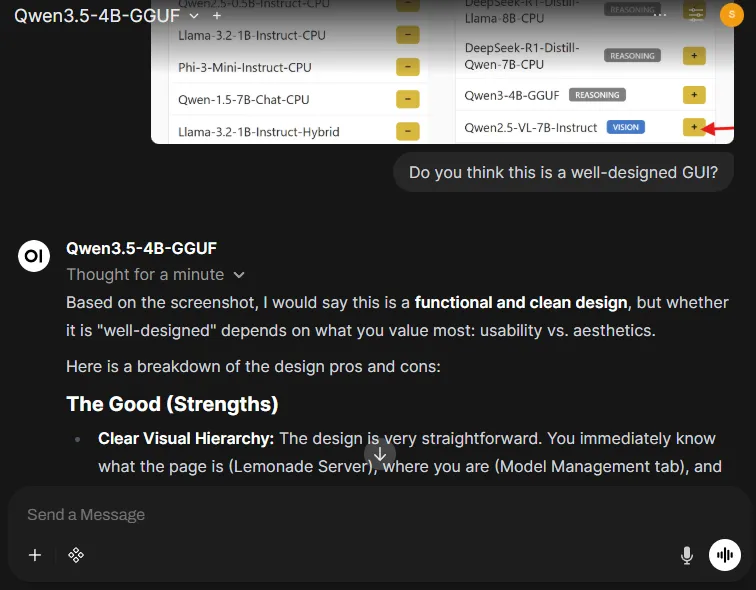

Click the filter icon, select “By Category,” then choose a model from the Vision section (e.g.,

Qwen3.5-4B-GGUF)

-

Click the

+button in the message box and upload an image -

Ask something that forces true image understanding:

Do you think this is a well-designed GUI?

-

The model answers based on the image content, not generic text.

This demonstrates that Open WebUI can send multimodal requests (text + image) through the backend (Lemonade) to a vision model.
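In the OpenAI chat format, a text + image message is commonly expressed as a list of content parts, with the image embedded as a base64 data URL. The sketch below builds such a message; the helper name and placeholder bytes are illustrative, and whether Open WebUI encodes uploads exactly this way is an assumption.

```python
import base64


def image_message(question: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build one user message carrying a text part and an inline image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }


# Placeholder bytes stand in for a real screenshot read from disk.
msg = image_message("Do you think this is a well-designed GUI?", b"placeholder image bytes")
print(msg["content"][1]["image_url"]["url"][:22])
```

A message like this would go in the `messages` array of a chat completions request, with a vision-capable model selected.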

Activity 3: Generate an Image from a Text Prompt (Stable Diffusion)

Stable Diffusion models don’t support text generation; they only generate images through the Images API.

Step 1: Configure Image Generation in Open WebUI

-

Go to Lemonade, search for

SDXL-Turbo(fast) orSDXL-Base-1.0(higher quality), and download it. -

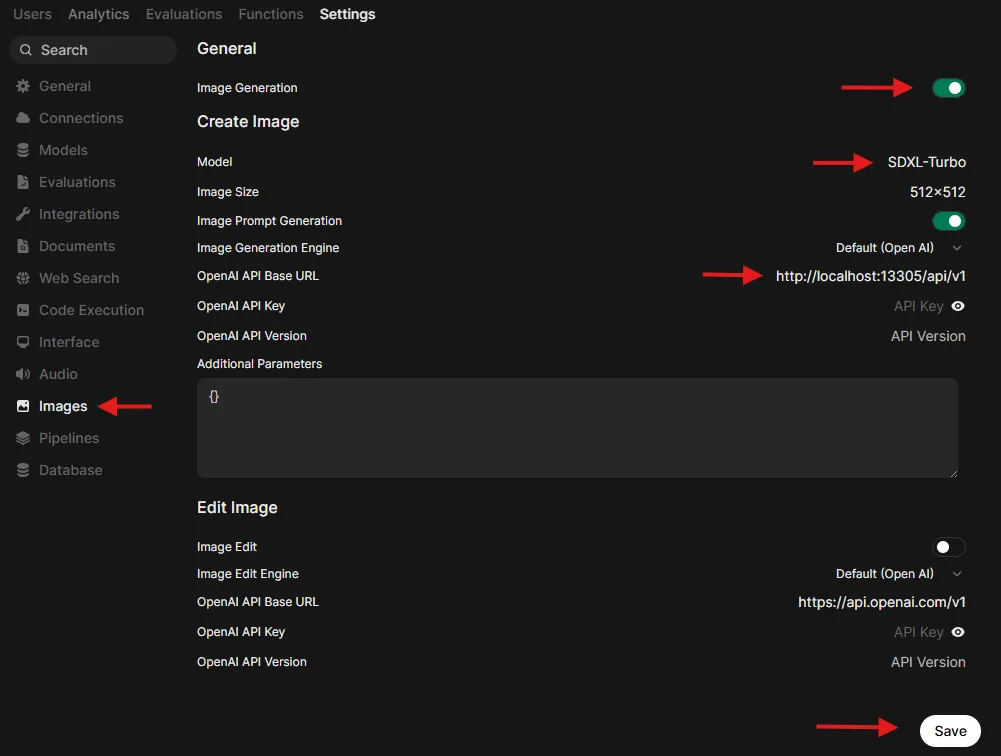

Go to Admin Settings → Images (http://localhost:8080/admin/settings/images)

-

Set:

- Image Generation: ON

- Image Generation Engine: Default (OpenAI)

- OpenAI API Base URL:

http://localhost:13305/api/v1 - OpenAI API Key:

- - Model:

SDXL-TurboorSDXL-Base-1.0

-

If you want to add more parameters, add them to the text field as JSON. For example:

{ "steps": 4, "cfg_scale": 1 }. See available parameters at Image Generation (Stable Diffusion CPP).

-

Save

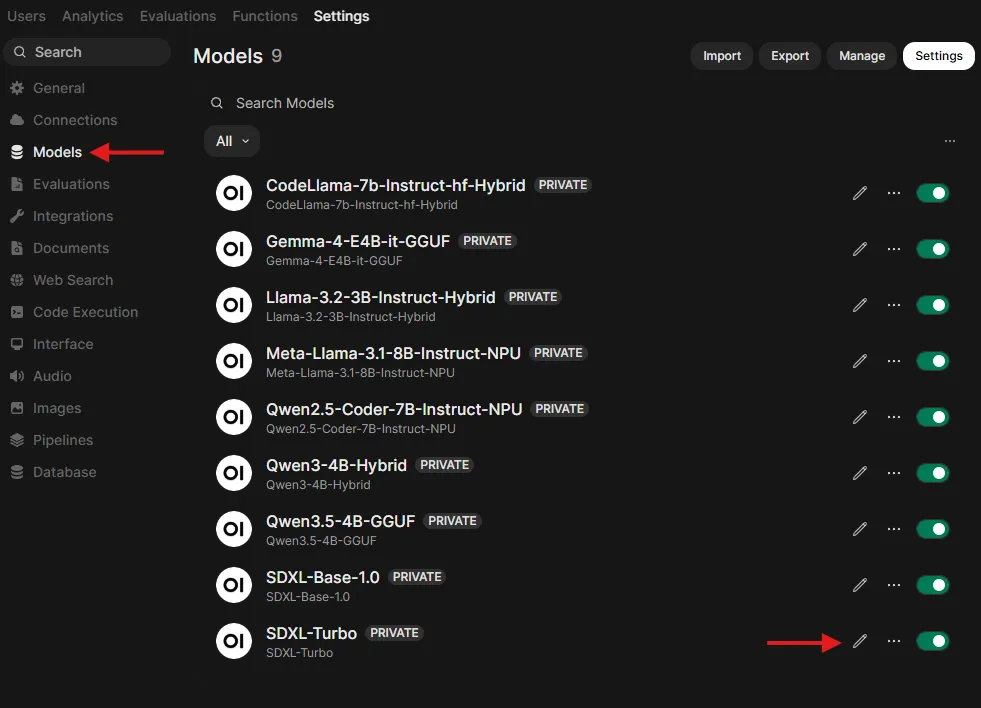

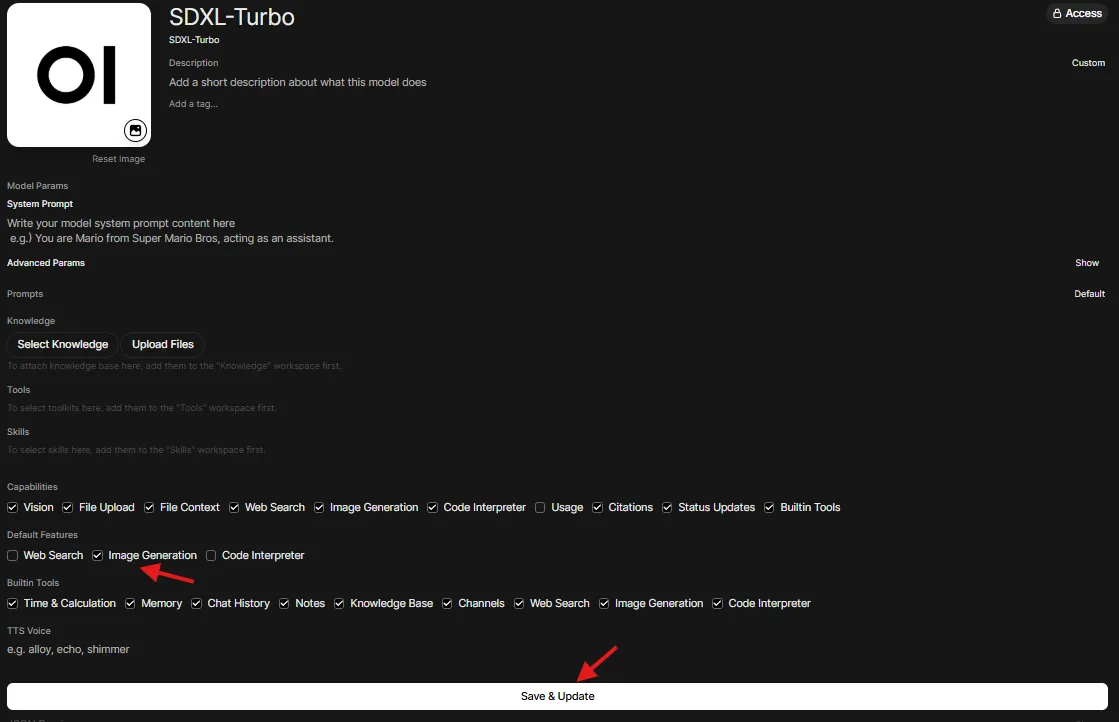

Step 2: Allow Image Generation for the model

This step ensures that you enable Image Generation as a capability for your model.

- Go to Admin Settings → Models (http://localhost:8080/admin/settings/models) and choose your model

- Turn on Image Generation

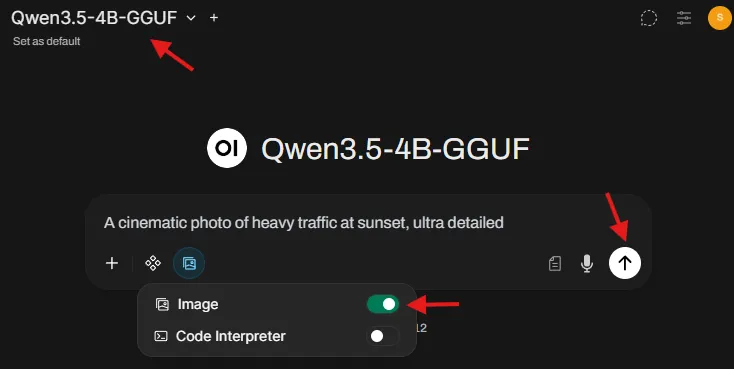

Step 3: Generate an image from the chat screen

- Go back to chat at

http://localhost:8080. - Select a Text Generation LLM in the model dropdown (example: Qwen, Llama). Do not select a Stable Diffusion model as this is a chat model selector.

- In the message area, click on Integrations, and toggle Image ON.

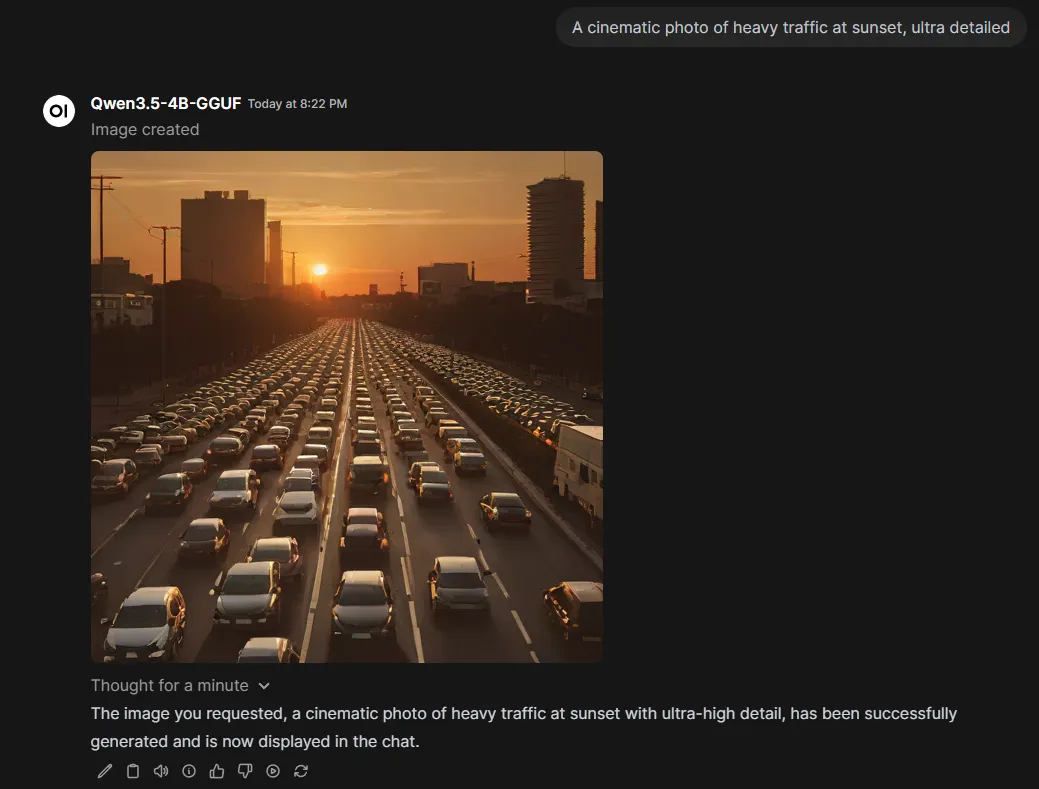

- Use a prompt like:

A cinematic photo of heavy traffic at sunset, ultra detailed. - An image is generated and appears in the chat.

This establishes that Open WebUI can coordinate a “two-part” workflow:

- The LLM helps refine the prompt

- The image is generated via Lemonade’s Images endpoint using Stable Diffusion

Troubleshooting

“No models show up”

- Confirm http://localhost:13305/api/v1/models loads in a browser

- Re-check Open WebUI connection Base URL: http://localhost:13305/api/v1

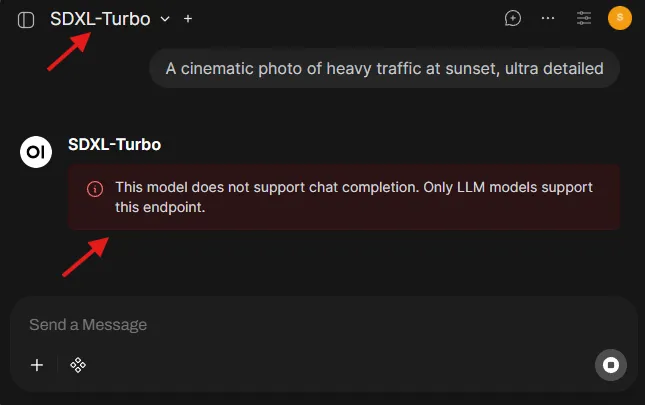

“This model does not support chat completion” error message

- You selected an image model (SDXL-Turbo / SDXL-Base-1.0) in the chat model dropdown.

- Fix: select an LLM for chat, and use the Image toggle + Images settings for generation.

Image generation errors/timeouts

- Start with SDXL-Turbo (fast, fewer steps)

- Once working, switch the image model to SDXL-Base-1.0 for quality

Next Steps

You now have a working “local AI stack”: a single UI controlling multiple model types through a standard API.

Here are three expansions that unlock entirely new workflows:

1. Speech-to-Text with Whisper

Try turning audio into text using a Whisper model, then feed it into an LLM for summarization, action items, or rewriting. This is the foundation for meeting notes and voice-driven assistants.

2. Python Coding inside Open WebUI

Use Open WebUI’s built-in code execution experience to run Python snippets, inspect outputs, and iterate faster—without leaving the UI. Reference

3. HTML Rendering inside Open WebUI

Render HTML outputs directly in the interface. This is surprisingly powerful for building quick prototypes, formatted reports, and interactive snippets. Reference


Need help with this playbook?

Run into an issue or have a question? Open a GitHub issue and our team will take a look.