Automating Workflows with n8n and Local LLMs

Build an AI-powered news summarizer using n8n and Lemonade.

Overview

n8n is a workflow automation platform that lets you connect apps and services using a visual node-based editor.

This playbook teaches you how to set up an AI-powered financial news summarizer that scrapes the AP News business section, extracts key headlines, and uses a local LLM running on your system to generate an investor-focused summary.

What You’ll Learn

- Installing and launching n8n

- Importing and configuring a pre-built workflow

- Connecting to Lemonade using the native n8n integration

- Understanding workflow nodes and data flow

What is Lemonade?

Lemonade is a local LLM serving platform built for AMD hardware. It provides an OpenAI-compatible API that runs entirely on your machine—your data never leaves your device.

In this playbook, we use Lemonade to serve a local LLM that n8n connects to for AI-powered tasks.

n8n includes a native Lemonade node (Lemonade Chat Model) that provides first-class integration, with no need for manual configuration. This makes connecting your local LLM to automation workflows straightforward.
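To make the "OpenAI-compatible API" point concrete, here is a minimal Python sketch of the kind of request a client could send to a local Lemonade server. The base URL is the default quoted in this playbook. The `/chat/completions` path and the response shape are assumptions based on the OpenAI API convention, and `build_chat_request` and `send` are hypothetical helper names, not part of Lemonade or n8n.

```python
import json
import urllib.request

# Default base URL quoted in this playbook. The /chat/completions path is an
# assumption that follows the OpenAI API convention.
BASE_URL = "http://localhost:13305/api/v1"


def build_chat_request(prompt: str, model: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the text of the first choice.

    Assumes the OpenAI-style response layout: choices[0].message.content.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a model loaded in Lemonade, `send(build_chat_request("Say hello.", model="gpt-oss-20b-GGUF"))` should return the model's reply as a string. The native n8n node handles this exchange for you, so the sketch is only meant to show what happens underneath.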

Prerequisites

Lemonade

Installing Lemonade

Download the latest installer from lemonade-server.ai and run the .msi file.

After installation:

- The lemonade CLI is added to your system PATH automatically

- The Lemonade server is expected to run in the background automatically

You can also install silently from the command line:

    msiexec /i lemonade-server-minimal.msi /qn

Ubuntu:

    sudo add-apt-repository ppa:lemonade-team/stable
    sudo apt install lemonade-server

Arch Linux (AUR):

    yay -S lemonade-server

For other distributions or to install from source, see the full installation options.

Verifying Lemonade Installation

Open a terminal and run:

    lemonade --version

You should see output like:

    lemonade version x.y.z

If you see a version number, Lemonade is installed correctly and ready to go.

For quick reference, here are common Lemonade CLI commands:

| Command | What it does |
|---|---|
| lemonade --help | Shows all available commands and flags. |
| lemonade --version | Prints the installed Lemonade version. |
| lemonade status | Confirms whether the Lemonade server is running and reachable. The default OpenAI-compatible API base URL is http://localhost:13305/api/v1. |
| lemonade list | Lists models available to your Lemonade setup. |
| lemonade pull <MODEL_NAME> | Downloads a model without launching it. |
| lemonade run <MODEL_NAME> | Downloads the model if needed, then starts it for inference/chat. |
| lemonade run <MODEL_NAME> --llamacpp rocm | Starts a llama.cpp model with the ROCm backend. |
| lemonade run <MODEL_NAME> --llamacpp vulkan | Starts a llama.cpp model with the Vulkan backend. |
| lemonade config | Displays the current Lemonade configuration values. |
| lemonade config set llamacpp.backend=rocm | Sets the default llama.cpp backend to ROCm. |

For the latest Lemonade server options or troubleshooting, please refer to the official Lemonade documentation.
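If you prefer to check the server from a script rather than the CLI, the sketch below queries the base URL from the table above. It assumes Lemonade serves an OpenAI-style `/models` endpoint that returns a `data` array of objects with an `id` field. That follows from the API being OpenAI-compatible, but it is an assumption here, and `parse_model_ids` and `list_models` are hypothetical helper names.

```python
import json
import urllib.request

# Default base URL from the command table. The /models path and the
# {"data": [{"id": ...}]} response shape are assumed from the OpenAI convention.
BASE_URL = "http://localhost:13305/api/v1"


def parse_model_ids(body: str) -> list[str]:
    """Extract model ids from an OpenAI-style model list response."""
    data = json.loads(body).get("data", [])
    return [entry["id"] for entry in data if "id" in entry]


def list_models(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """Return model ids, or an empty list if the server cannot be reached."""
    try:
        with urllib.request.urlopen(f"{base_url}/models", timeout=timeout) as response:
            return parse_model_ids(response.read().decode("utf-8"))
    except OSError:  # URLError and socket timeouts are OSError subclasses
        return []
```

An empty list from `list_models()` can mean either that the server is not running or that it reports no models, so treat it as a prompt to run `lemonade status` rather than as a definitive diagnosis.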

Node.js

Node.js 22.22.1 LTS is the recommended version for this platform.

- Download the Windows 64-bit Installer from nodejs.org

- Run the installer and follow the prompts

- Verify installation:

    node --version
    npm --version

Alternatively, install Node.js with Homebrew:

    # Download and install Homebrew:
    curl -o- https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh | bash

    # Download and install Node.js:
    brew install node@22

    # Verify the Node.js version:
    node -v # Should print "v22.22.1".

    # Verify npm version:
    npm -v # Should print "10.9.4".


Podman

Podman is containerization software for Linux.

Step 1: Install the core Podman engine and the standalone Compose V2 parsing plugin.

    sudo apt update && sudo apt install -y podman docker-compose-plugin

Step 2: Enable the system-wide Podman API socket so the Compose plugin can communicate with the container runtime.

    sudo systemctl enable --now podman.socket

Step 3: Run a temporary test container to verify the engine can successfully pull and execute images.

    sudo podman run --rm docker.io/library/hello-world

Installing n8n

Install n8n globally using npm.

    npm install -g n8n

Alternatively, on Linux you can use the Podman service to containerize the n8n installation.

Please download the following into a directory of your choice:

In that directory, run the following command:

    podman compose up -d

This should install n8n and write its data to persistent storage.

Launch n8n by typing localhost:5678 into your browser address bar.

Launching n8n

Start n8n from the terminal:

    n8n start

n8n starts a local web server. Press 'o' in the terminal, or open your browser to http://localhost:5678, to access the editor.
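n8n can take a few seconds to start listening. If you script the launch, a small readiness check like the sketch below can wait until the port accepts connections before opening the editor. It only tests that something is listening on port 5678, not that the service is n8n, and `wait_for_port` is a hypothetical helper name.

```python
import socket
import time


def wait_for_port(host: str = "localhost", port: int = 5678, timeout: float = 30.0) -> bool:
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means a server is accepting connections.
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            time.sleep(0.5)  # nothing listening yet; retry shortly
    return False
```

For example, `wait_for_port()` with the defaults polls localhost:5678 for up to 30 seconds. The same helper can be pointed at port 13305 to wait for the Lemonade server.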

Launching Lemonade

Lemonade is the local server that runs the model and connects to n8n. Open a terminal and run one of the following commands, depending on the model you want to load:

    lemonade run gpt-oss-120b-GGUF --llamacpp vulkan

    lemonade run extra.gpt-oss-120b-GGUF --llamacpp vulkan

    lemonade run gpt-oss-20b-GGUF --llamacpp vulkan

Alternatively, you can use the Lemonade GUI to choose and load a model. You can also experiment with different backends, such as rocm.

Setting Up the Workflow

Step 1: Sign Up or Log In to n8n

When you first open n8n, you’ll be prompted to create an account or log in:

- Open http://localhost:5678 in your browser

- Create a new local account with your email, or log in if you already have one

- Once logged in, you’ll see the n8n dashboard

Step 2: Import the Workflow

We’ve provided a pre-built workflow that you can import directly:

- Download the following workflow file:

- Click Start from Scratch to open the workflow editor. Alternatively, click the + Button in the top left, and then Add workflow.

- Click the … menu (three dots) in the top right bar and select Import from file

- Select the downloaded financial-news-workflow.json file

- The workflow will appear on the canvas

Step 3: Understanding the Workflow

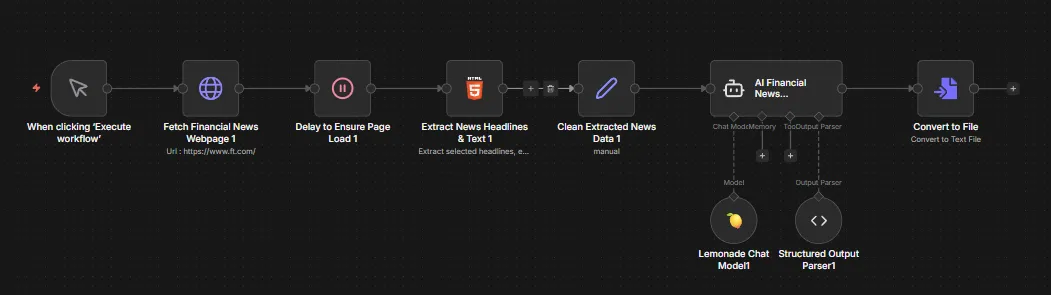

The imported workflow contains 9 connected nodes:

| Node | Purpose |
|---|---|
| When clicking ‘Execute workflow’ | Manual trigger to start the workflow |
| Fetch Financial News Webpage | HTTP GET request to https://apnews.com/business |
| Delay to Ensure Page Load | Wait node to ensure page content is fully loaded |
| Extract News Headlines & Text | HTML node that extracts headlines, editor’s picks, top stories, and regional news using CSS selectors |
| Clean Extracted News Data | Set node that combines all extracted data into a single text field |
| AI Financial News Summarizer | AI Agent that processes the news with a financial analyst system prompt |
| Lemonade Chat Model | Connects to your local Lemonade server running the LLM |
| Structured Output Parser | Formats the AI output as structured JSON |
| Convert to File | Converts the summary to a downloadable file |
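To illustrate the data flow through the extract and clean stages, here is a rough Python analogue. It is not the workflow's actual logic: the real HTML node uses CSS selectors that are defined in the workflow file, whereas this sketch simply collects text from `h2` and `h3` tags with the standard library. The `text` field name and the helper names are hypothetical.

```python
from html.parser import HTMLParser


class HeadlineExtractor(HTMLParser):
    """Collect the text inside heading tags (a stand-in for CSS selectors)."""

    def __init__(self, tags=("h2", "h3")):
        super().__init__()
        self.tags = set(tags)
        self.depth = 0       # greater than 0 while inside a matching tag
        self.buffer = []     # text fragments of the heading being read
        self.headlines = []  # finished, whitespace-normalized headlines

    def handle_starttag(self, tag, attrs):
        if tag in self.tags:
            self.depth += 1

    def handle_data(self, data):
        if self.depth:
            self.buffer.append(data)

    def handle_endtag(self, tag):
        if tag in self.tags and self.depth:
            self.depth -= 1
            if self.depth == 0:
                # Collapse runs of whitespace into single spaces.
                text = " ".join("".join(self.buffer).split())
                if text:
                    self.headlines.append(text)
                self.buffer = []


def extract_headlines(html: str) -> list[str]:
    """Roughly what the extraction step does: pull headline text from HTML."""
    parser = HeadlineExtractor()
    parser.feed(html)
    parser.close()
    return parser.headlines


def clean(headlines: list[str]) -> dict:
    """Roughly what the Set node does: merge everything into one text field."""
    return {"text": "\n".join(f"- {h}" for h in headlines)}
```

Feeding `clean(extract_headlines(page_html))` into the agent mirrors the hand-off between the Clean Extracted News Data node and the AI Financial News Summarizer: the model receives one block of text rather than a list of separate fields.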

Step 4: Configure Lemonade Credentials

Before running the workflow, you need to connect it to your local Lemonade server:

- Double click the Lemonade Chat Model node in n8n

- In the Credential to connect with dropdown menu, select Create New Credential

- Enter the values from the table below and click Save.

- Choose the relevant model that you have loaded in Lemonade Server.

| Field | Value |
|---|---|
| Base URL | http://localhost:13305/api/v1 |
| API Key | lemonade |

This workflow uses GPT-OSS-120B, which is pre-installed as extra.gpt-oss-120b-GGUF. You can change this to another loaded model in the Lemonade Chat Model node settings.

Step 5: Test the Workflow

- Ensure Lemonade is running with a model loaded

- Click Execute workflow at the bottom center of the canvas

- Watch each node execute from left to right—they turn green when complete

- Click the AI Financial News Summarizer node to see the generated summary in the bottom pane.

- Click the Convert to File node to download the corresponding text file in the bottom pane.

Understanding the AI Agent

The AI Financial News Summarizer uses a system prompt designed for financial analysis:

    You are an AI financial analyst. Your role is to read, understand, and
    summarize key financial news from today. The goal is to provide investors
    with a clear and concise market overview to support better investment decisions.

    Investor Outlook
    Today's news points to [bullish/bearish/neutral] sentiment. Watch for
    [economic event/earnings report] tomorrow, which could influence market direction.

The agent receives the cleaned news data and outputs a structured summary with market sentiment.
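The pairing of a system prompt with a structured output parser can be sketched in Python as follows. The message layout follows the common chat convention of a system message followed by a user message. The `summary` and `sentiment` keys are hypothetical, chosen only to match the bullish/bearish/neutral wording in the prompt; the workflow's actual output schema is defined in its Structured Output Parser node.

```python
import json

# Sentiment labels taken from the system prompt's [bullish/bearish/neutral] slot.
ALLOWED_SENTIMENTS = {"bullish", "bearish", "neutral"}


def build_messages(system_prompt: str, news_text: str) -> list[dict]:
    """Pair the system prompt with the cleaned news as the user message."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": news_text},
    ]


def parse_summary(raw: str) -> dict:
    """Validate a JSON reply with hypothetical 'summary' and 'sentiment' keys.

    Raises ValueError if the reply is not valid JSON or fails validation.
    """
    data = json.loads(raw)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    summary = data.get("summary")
    sentiment = str(data.get("sentiment", "")).lower()
    if not isinstance(summary, str) or not summary.strip():
        raise ValueError("missing or empty 'summary'")
    if sentiment not in ALLOWED_SENTIMENTS:
        raise ValueError(f"unexpected sentiment: {sentiment!r}")
    return {"summary": summary.strip(), "sentiment": sentiment}
```

Validating the reply before passing it on is the main design point: local models do not always follow a requested format, so a downstream step such as Convert to File is better off receiving either a well-formed object or a clear error.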

Saving Your Workflow

Click the workflow name at the top and rename it if desired. Workflows auto-save as you work.

Next Steps

- Schedule automation: Replace Manual Trigger with a Schedule Trigger to run daily

- Send notifications: Add a Discord, Slack, or Email node to receive summaries

- Try different models: Change the model in the Lemonade Chat Model node to experiment with different LLMs

- Customize extraction: Modify the HTML Extract node’s CSS selectors to target different news sections

- Try different backends: n8n also supports Ollama, LM Studio, and other local LLM backends

Explore n8n Templates

n8n has hundreds of pre-built workflow templates. Browse the official template library at:

Search for “AI”, “LLM”, or “automation” to find workflows you can import and customize.

For more information, check out the n8n Documentation.

Need help with this playbook?

Run into an issue or have a question? Open a GitHub issue and our team will take a look.